Introduction

Experiment tracking tools help machine learning teams log, compare, and manage experiments. They capture parameters, metrics, datasets, code versions, and outputs, so teams can reproduce results and improve models iteratively. Without tracking, results get lost and outcomes become hard to compare across runs.

Teams now routinely run hundreds or even thousands of experiments, so structured tracking is no longer optional. As MLOps practices, distributed teams, and large-scale model training become common, these tools provide transparency, reproducibility, and faster iteration. They also support governance by maintaining a clear history of model development.

Common use cases include:

- Tracking hyperparameters and model performance

- Comparing experiment runs

- Managing dataset and model versions

- Supporting collaboration across teams

- Ensuring reproducibility and auditability
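
To make these use cases concrete, here is a minimal sketch in plain Python of what a tracker records for each run and how two runs can be compared. It is not the API of any tool covered in this article; the `Run` class, the `best_run` helper, and all example values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One experiment run: hyperparameters in, metrics out (hypothetical structure)."""
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)
    dataset_version: str = "unknown"
    code_version: str = "unknown"

    def log_metric(self, key: str, value: float) -> None:
        self.metrics[key] = value


def best_run(runs: list, metric: str, higher_is_better: bool = True) -> Run:
    """Return the run with the best value for `metric`, skipping runs that lack it."""
    candidates = [r for r in runs if metric in r.metrics]
    if not candidates:
        raise ValueError(f"no run logged {metric!r}")
    pick = max if higher_is_better else min
    return pick(candidates, key=lambda r: r.metrics[metric])


# Illustrative values only, not real results.
baseline = Run("baseline", {"lr": 0.1, "epochs": 5}, dataset_version="v1")
baseline.log_metric("val_accuracy", 0.81)

tuned = Run("tuned", {"lr": 0.01, "epochs": 20}, dataset_version="v1")
tuned.log_metric("val_accuracy", 0.86)

winner = best_run([baseline, tuned], "val_accuracy")
print(winner.name, winner.params)
```

Dedicated tools add storage, dashboards, and access control on top of this basic idea, but the record they keep per run has roughly this shape.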

Key evaluation criteria buyers should consider:

- Depth of experiment tracking

- Visualization and comparison capabilities

- Integration with ML frameworks

- Scalability and performance

- Collaboration features

- Version control and lineage tracking

- Security and compliance readiness

- Ease of setup and usability

Best for: Data scientists, ML engineers, research teams, and organizations building scalable machine learning pipelines.

Not ideal for: Teams that rarely train or retrain models, or whose work is fixed reporting and analytics rather than iterative experimentation.

Key Trends in Experiment Tracking Tools

- MLOps integration: Native support for CI/CD pipelines and model deployment

- AI-assisted experimentation: Automated suggestions for tuning and optimization

- Collaboration-first platforms: Shared dashboards and experiment logs

- Cloud-native solutions: Managed platforms reducing infrastructure overhead

- Reproducibility focus: Strong versioning and lineage tracking

- Visualization enhancements: Advanced comparison dashboards

- Notebook integration: Seamless support for Jupyter and IDEs

- Scalable experiment storage: Handling large experiment volumes efficiently

How We Evaluated Experiment Tracking Tools (Methodology)

- Market adoption and real-world usage

- Feature completeness and flexibility

- Performance and scalability

- Security and compliance capabilities

- Integration with ML ecosystems

- Ease of onboarding and usability

- Community strength and vendor support

- Cost and deployment flexibility

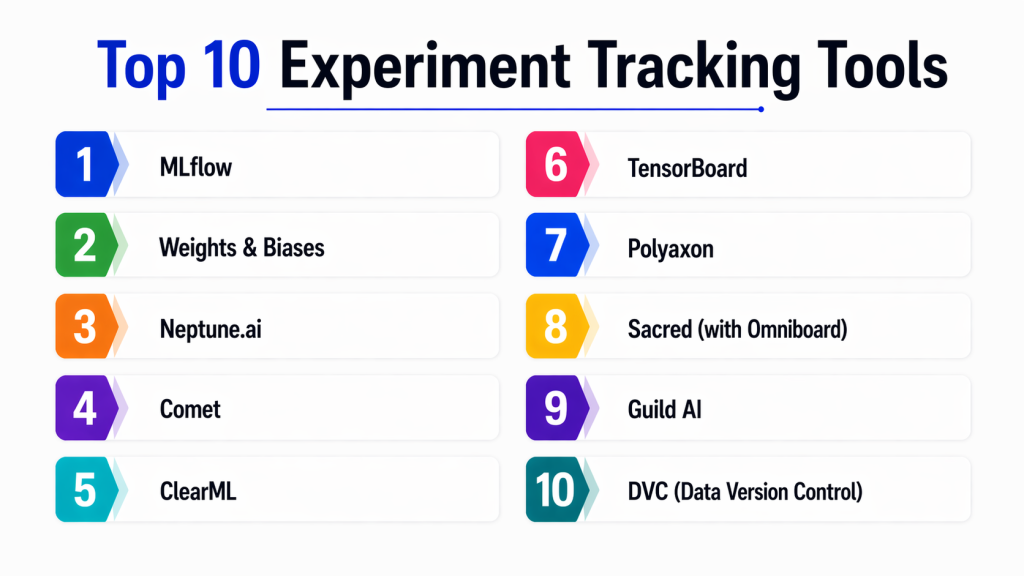

Top 10 Experiment Tracking Tools

#1 — MLflow

Short description:

MLflow is one of the most widely used open-source platforms for managing the machine learning lifecycle. It provides experiment tracking, a model registry, and deployment tooling, and it integrates with most major ML frameworks. This flexibility makes it suitable for both small teams and large enterprises managing complex ML pipelines.

Key Features

- Experiment tracking and logging

- Model registry and versioning

- Reproducibility tools

- REST API support

- Integration with multiple ML frameworks

- Deployment support

Pros

- Open-source and highly flexible

- Strong ecosystem and adoption

Cons

- Requires setup and infrastructure

- UI is relatively basic

Platforms / Deployment

- Cloud / Self-hosted

Security & Compliance

- RBAC, authentication support

Integrations & Ecosystem

MLflow integrates with a wide range of tools across the ML ecosystem.

- TensorFlow

- PyTorch

- Databricks

- APIs

Support & Community

Very strong open-source community with extensive documentation.
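
As a rough sketch of what logging a run looks like, the function below uses three calls from MLflow's Python tracking API: `start_run`, `log_params`, and `log_metric`. It takes the tracking module as an argument so that the example runs even without MLflow installed. The `FakeTracker` stand-in, the function name `log_training_run`, and the parameter and loss values are assumptions made for illustration.

```python
from contextlib import contextmanager


def log_training_run(tracker, params, losses):
    """Log hyperparameters once and one loss value per epoch.

    `tracker` is expected to expose start_run(), log_params(dict) and
    log_metric(key, value, step=...), as the `mlflow` module does.
    """
    with tracker.start_run():
        tracker.log_params(params)
        for epoch, loss in enumerate(losses):
            tracker.log_metric("loss", loss, step=epoch)


class FakeTracker:
    """Hypothetical in-memory stand-in so the sketch runs without MLflow."""

    def __init__(self):
        self.params = {}
        self.metrics = []

    @contextmanager
    def start_run(self):
        yield self

    def log_params(self, params):
        self.params.update(params)

    def log_metric(self, key, value, step=None):
        self.metrics.append((key, value, step))


tracker = FakeTracker()
# Illustrative values only, not real training output.
log_training_run(tracker, {"lr": 0.01, "batch_size": 32}, [0.9, 0.6, 0.4])
print(tracker.params, len(tracker.metrics))
```

With MLflow installed, passing the module itself (`import mlflow`, then `log_training_run(mlflow, ...)`) should record the same run to whatever tracking location is configured.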

#2 — Weights & Biases (W&B)

Short description:

Weights & Biases is a powerful experiment tracking platform known for its intuitive interface and advanced visualization capabilities. It helps teams track experiments, compare results, and collaborate effectively. It is widely used in both research and production environments.

Key Features

- Experiment tracking

- Advanced visualization dashboards

- Hyperparameter tuning

- Model management

- Collaboration tools

Pros

- Excellent UI and user experience

- Easy to onboard and use

Cons

- Premium features can be expensive

- Internet dependency for full functionality

Platforms / Deployment

- Cloud / Hybrid

Security & Compliance

- Encryption, access control

Integrations & Ecosystem

- TensorFlow

- PyTorch

- Keras

- APIs

Support & Community

Strong community with enterprise support options.

#3 — Neptune.ai

Short description:

Neptune.ai is a metadata store designed for tracking and organizing machine learning experiments at scale. It enables teams to log and compare experiments efficiently, making it ideal for complex workflows.

Key Features

- Experiment metadata tracking

- Visualization tools

- Collaboration features

- Model monitoring support

- API integration

Pros

- Highly scalable

- Flexible experiment tracking

Cons

- Setup effort required

- Pricing varies

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC

Integrations & Ecosystem

- Python

- ML frameworks

- APIs

Support & Community

Growing adoption with solid documentation.

#4 — Comet

Short description:

Comet is an experiment tracking platform that provides tools for logging, comparing, and optimizing machine learning models. It supports visualization and collaboration features for teams.

Key Features

- Experiment tracking

- Visualization dashboards

- Model registry

- Collaboration tools

- Hyperparameter optimization

Pros

- Strong visualization tools

- Easy integration with ML frameworks

Cons

- Paid plans for advanced features

- Slight learning curve

Platforms / Deployment

- Cloud / On-premise

Security & Compliance

- Encryption, access control

Integrations & Ecosystem

- ML frameworks

- APIs

- Notebooks

Support & Community

Reliable support with good documentation.

#5 — ClearML

Short description:

ClearML is an open-source MLOps platform that includes experiment tracking, orchestration, and automation features. It provides a complete environment for managing ML workflows.

Key Features

- Experiment tracking

- Pipeline orchestration

- Model management

- Visualization tools

- Automation

Pros

- End-to-end MLOps capabilities

- Open-source flexibility

Cons

- Requires setup and maintenance

- UI complexity

Platforms / Deployment

- Cloud / Self-hosted

Security & Compliance

- RBAC, authentication

Integrations & Ecosystem

- Python

- ML frameworks

- DevOps tools

Support & Community

Active open-source community.

#6 — TensorBoard

Short description:

TensorBoard is a visualization tool primarily used with TensorFlow for tracking experiments and monitoring training processes. It provides insights into model performance through visual dashboards.

Key Features

- Metric visualization

- Graph visualization

- Performance monitoring

- Integration with TensorFlow

- Logging tools

Pros

- Free and easy to use

- Strong visualization capabilities

Cons

- Most tightly integrated with TensorFlow, although other frameworks such as PyTorch can also write TensorBoard logs

- Not a full experiment tracking platform

Platforms / Deployment

- Web / Local

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- TensorFlow

- Python

Support & Community

Large and active community.

#7 — Polyaxon

Short description:

Polyaxon is an MLOps platform that offers experiment tracking, orchestration, and automation with strong integration into DevOps workflows.

Key Features

- Experiment tracking

- Pipeline orchestration

- Kubernetes support

- Automation

- Model management

Pros

- Scalable and DevOps-friendly

- Strong automation capabilities

Cons

- Complex setup

- Requires Kubernetes expertise

Platforms / Deployment

- Cloud / Self-hosted

Security & Compliance

- RBAC, authentication

Integrations & Ecosystem

- Kubernetes

- ML frameworks

- APIs

Support & Community

Growing ecosystem and support.

#8 — Sacred + Omniboard

Short description:

Sacred combined with Omniboard provides a lightweight experiment tracking solution suitable for research workflows and smaller teams.

Key Features

- Experiment configuration tracking

- Logging

- Visualization

- Lightweight architecture

- Python-based

Pros

- Simple and flexible

- Good for research use cases

Cons

- Limited enterprise features

- Smaller ecosystem

Platforms / Deployment

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python

- ML workflows

Support & Community

Moderate community support.

#9 — Guild AI

Short description:

Guild AI is an open-source tool focused on experiment tracking and reproducibility. It provides CLI-based workflows for managing experiments.

Key Features

- Experiment tracking

- Reproducibility tools

- CLI interface

- Visualization

- Model comparison

Pros

- Lightweight and simple

- Open-source

Cons

- Limited UI

- Smaller ecosystem

Platforms / Deployment

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python

- ML frameworks

Support & Community

Moderate support.

#10 — DVC (Data Version Control)

Short description:

DVC is a version control system for data and experiments that integrates with Git, enabling reproducibility and collaboration in ML projects.

Key Features

- Data versioning

- Experiment tracking

- Pipeline management

- Integration with Git

- Reproducibility

Pros

- Strong version control integration

- Reliable for reproducibility

Cons

- CLI-heavy

- Learning curve

Platforms / Deployment

- Cross-platform / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Git

- Python

- ML pipelines

Support & Community

Strong open-source community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| MLflow | Open-source tracking | Web | Cloud / Self-hosted | Model registry | N/A |
| Weights & Biases (W&B) | Visualization | Web | Cloud / Hybrid | Dashboards | N/A |
| Neptune.ai | Metadata tracking | Web | Cloud | Experiment store | N/A |
| Comet | Model tracking | Web | Cloud / On-premise | Visualization | N/A |
| ClearML | MLOps | Web | Cloud / Self-hosted | Pipeline automation | N/A |
| TensorBoard | Visualization | Web | Local | Graphs | N/A |
| Polyaxon | DevOps ML | Web | Cloud / Self-hosted | Kubernetes support | N/A |
| Sacred + Omniboard | Research | Python | Self-hosted | Lightweight | N/A |
| Guild AI | Reproducibility | CLI | Self-hosted | Simplicity | N/A |
| DVC | Version control | CLI | Self-hosted | Data versioning | N/A |

Evaluation & Scoring of Experiment Tracking Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| MLflow | 9 | 7 | 9 | 8 | 8 | 9 | 9 | 8.50 |
| Weights & Biases | 9 | 9 | 8 | 8 | 8 | 9 | 7 | 8.35 |
| Neptune.ai | 8 | 8 | 8 | 8 | 8 | 8 | 7 | 7.85 |
| Comet | 8 | 8 | 8 | 8 | 7 | 8 | 7 | 7.75 |
| ClearML | 8 | 7 | 8 | 7 | 8 | 8 | 8 | 7.75 |
| TensorBoard | 7 | 9 | 6 | 6 | 7 | 7 | 9 | 7.35 |
| Polyaxon | 8 | 6 | 8 | 8 | 8 | 7 | 7 | 7.45 |
| Sacred | 6 | 7 | 6 | 6 | 6 | 6 | 8 | 6.45 |
| Guild AI | 6 | 7 | 6 | 6 | 6 | 6 | 8 | 6.45 |
| DVC | 8 | 6 | 8 | 7 | 7 | 8 | 9 | 7.65 |

How to interpret scores:

These scores are relative comparisons, weighted by the percentages shown in the column headers. A higher total means a tool scored better across those criteria overall; it does not mean the tool is the best fit for every team. The right choice depends on your team’s workflow, scale, and integration needs, so evaluate shortlisted tools on a real project before committing.
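
The weighted totals can be recomputed from the per-criterion scores. The sketch below assumes each total is the sum of the criterion scores multiplied by the weights in the table header; the function name `weighted_total` and the dictionary keys are illustrative.

```python
# Weights from the scoring table header (they sum to 1.0).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict) -> float:
    """Sum of score * weight over all criteria, rounded to two decimals."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return round(sum(scores[name] * weight for name, weight in WEIGHTS.items()), 2)


# Criterion scores copied from the MLflow row of the table.
mlflow_scores = {
    "core": 9, "ease": 7, "integrations": 9, "security": 8,
    "performance": 8, "support": 9, "value": 9,
}
print(weighted_total(mlflow_scores))
```

Changing the weights to match your own priorities and recomputing each row is a quick way to adapt the ranking to your team.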

Which Experiment Tracking Tool Is Right for You?

Solo / Freelancer

- DVC, Guild AI

SMB

- MLflow, Neptune.ai

Mid-Market

- Weights & Biases, Comet

Enterprise

- ClearML, Polyaxon

Budget vs Premium

- Budget: MLflow, DVC

- Premium: Weights & Biases, Comet

Feature Depth vs Ease of Use

- Deep features: MLflow, ClearML

- Easy to use: Weights & Biases, TensorBoard

Integrations & Scalability

- Best integrations: MLflow, Weights & Biases

Security & Compliance Needs

- Strongest: Comet, ClearML

Frequently Asked Questions (FAQs)

1. What is an experiment tracking tool?

Experiment tracking tools log parameters, metrics, and results of machine learning experiments. They help teams compare different runs, understand performance variations, and maintain a history of experiments for future reference. This improves reproducibility and decision-making.

2. Why are experiment tracking tools important?

They prevent loss of insights and reduce duplication of work. By maintaining a structured record of experiments, teams can easily identify what works and what doesn’t. This accelerates model development and improves collaboration.

3. Are these tools only for data scientists?

No. Data scientists are the main users, but ML engineers and MLOps teams also rely on them extensively. The tools give all of these roles visibility into model performance and help manage the ML lifecycle.

4. Can these tools integrate with ML frameworks?

Yes, most tools integrate with popular frameworks like TensorFlow, PyTorch, and scikit-learn. This allows seamless tracking without major workflow changes.

5. Are open-source tools reliable?

Open-source tools like MLflow and DVC are widely adopted and reliable. However, they may require additional setup and maintenance compared to managed platforms.

6. What is reproducibility in machine learning?

Reproducibility means being able to recreate the same experiment results from the same data, code, and parameters, ideally in the same software environment. It is essential for debugging, validation, and collaboration.
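
Two habits that support reproducibility can be sketched with the standard library alone: fixing the random seed, and fingerprinting the exact data and parameters a run used so that a later run can be checked against them. The function names and the sample data below are hypothetical, and real projects usually also need to seed their ML framework and pin package versions.

```python
import hashlib
import json
import random


def fingerprint(data: bytes, params: dict) -> str:
    """Hash the dataset bytes together with the parameters (keys sorted for stability)."""
    digest = hashlib.sha256()
    digest.update(data)
    digest.update(json.dumps(params, sort_keys=True).encode("utf-8"))
    return digest.hexdigest()


def noisy_experiment(seed: int, n: int = 5) -> list:
    """Stand-in for a training run whose result depends on random numbers."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    return [rng.random() for _ in range(n)]


data = b"feature,label\n1.0,0\n2.0,1\n"  # hypothetical dataset contents
params = {"lr": 0.01, "epochs": 20}

# Same seed gives the same numbers.
same_seed_matches = noisy_experiment(42) == noisy_experiment(42)
# Same inputs give the same fingerprint, regardless of key order.
same_inputs_match = fingerprint(data, params) == fingerprint(data, {"epochs": 20, "lr": 0.01})
# Any change to the parameters changes the fingerprint.
changed_params_differ = fingerprint(data, params) != fingerprint(data, {**params, "lr": 0.1})

print(same_seed_matches, same_inputs_match, changed_params_differ)
```

Storing the fingerprint alongside each run makes it easy to tell whether two runs that produced different results really started from the same inputs.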

7. How do these tools support collaboration?

They provide shared dashboards, logs, and experiment histories. This allows teams to work together efficiently and make informed decisions based on past experiments.

8. What are common mistakes to avoid?

Common mistakes include not logging enough details, ignoring version control, and failing to standardize workflows. Logging parameters, data versions, and code versions consistently from the first run avoids most of these problems.
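
One way to guard against incomplete logging and missing version information is to check every run record against an agreed set of fields and to stamp it with the current Git commit. This is a minimal sketch: `REQUIRED_FIELDS`, `current_commit`, and `validate_record` are hypothetical names, and the only external command used is `git rev-parse HEAD`.

```python
import subprocess

# Hypothetical team convention: every run record must carry these keys.
REQUIRED_FIELDS = ("params", "metrics", "dataset_version", "code_version")


def current_commit() -> str:
    """Return the current Git commit hash, or "unknown" if it cannot be read."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return "unknown"
    return result.stdout.strip()


def validate_record(record: dict) -> list:
    """Return the required fields that are missing from a run record."""
    return [name for name in REQUIRED_FIELDS if name not in record]


record = {
    "params": {"lr": 0.01},
    "metrics": {"val_accuracy": 0.86},  # illustrative value, not a real result
    "code_version": current_commit(),
}
print(validate_record(record))
```

Here the record is missing its dataset version, so the check reports that field; a team could run the same check before any run is saved.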

9. Do these tools support large-scale experiments?

Yes, many tools are designed to handle large-scale experiments and distributed computing environments. They can manage thousands of experiment runs efficiently.

10. How do I choose the right tool?

Choose based on your team size, workflow complexity, integration needs, and budget. Testing a few tools in real-world scenarios is the best way to find the right fit.

Conclusion

Experiment tracking tools have become a foundational part of modern machine learning workflows, enabling teams to manage experiments systematically and improve model performance over time. As organizations scale their AI initiatives, these tools ensure transparency, reproducibility, and collaboration across teams. Whether you are working on small research projects or enterprise-scale ML systems, having a reliable tracking mechanism is essential.

The best tool depends on your specific requirements, including ease of use, integration capabilities, and scalability. Open-source tools like MLflow and DVC offer flexibility and cost advantages, while platforms like Weights & Biases and Comet provide advanced visualization and collaboration features. A practical approach is to shortlist a few tools, run pilot experiments, and validate how well they align with your workflows before making a final decision.