Introduction

Data observability tools help organizations monitor, detect, troubleshoot, and improve the health of their data systems. In simple terms, these platforms provide visibility into data pipelines, data quality, schema changes, freshness, lineage, and anomalies, so teams can trust their data and resolve issues before they affect business decisions. Instead of reacting to broken dashboards or incorrect reports, teams use data observability to monitor proactively and reach a root cause faster.

This category has become critical because modern data stacks are complex, distributed, and constantly changing. Data flows across warehouses, lakes, ETL pipelines, APIs, SaaS tools, and real-time systems. Without observability, small issues like schema drift or delayed ingestion can cascade into major business problems. These tools support data reliability, analytics accuracy, compliance, and AI readiness, making them essential for data-driven organizations.

Common use cases include:

- Monitoring data freshness and pipeline reliability

- Detecting anomalies in datasets and metrics

- Identifying schema changes and pipeline failures

- Root-cause analysis using lineage tracking

- Ensuring data quality and trust across teams
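To make the first use case concrete, here is a minimal sketch of a freshness check. It assumes you can already obtain the timestamp of a table's most recent load. The function name `is_stale`, the one-hour threshold, and the example timestamps are all hypothetical and are not taken from any tool in this list.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(last_loaded_at: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """Return True when the most recent load is older than max_age."""
    current = now or datetime.now(timezone.utc)
    return current - last_loaded_at > max_age


# Hypothetical example: a table that is expected to refresh every hour.
check_time = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_load = datetime(2024, 1, 1, 9, 30, tzinfo=timezone.utc)

print(is_stale(last_load, timedelta(hours=1), now=check_time))  # True
```

Observability platforms typically learn the expected refresh cadence rather than relying on a fixed threshold, but the underlying comparison is the same.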

Buyers should evaluate:

- Anomaly detection capabilities

- Data lineage and dependency mapping

- Alerting and incident management

- Integration with data stack tools

- Ease of setup and maintenance

- Scalability across pipelines and datasets

- Automation and AI capabilities

- Governance and security features

- Cost and pricing model

- Support and documentation

Best for: data engineers, analytics engineers, data platform teams, and organizations with complex data pipelines. These tools are especially valuable in mid-market and enterprise environments where data reliability is critical.

Not ideal for: small teams with simple pipelines or minimal data infrastructure. If your data environment is straightforward, basic monitoring may be sufficient.

Key Trends in Data Observability Tools

- AI-driven anomaly detection is becoming standard

- Shift-left data quality monitoring is increasing

- Integration with data catalogs and governance tools is growing

- Real-time observability is gaining importance

- Column-level lineage is becoming more common

- Automated root-cause analysis is improving

- Cloud-native observability platforms dominate new deployments

- Data reliability engineering is emerging as a discipline

- Integration with DevOps and incident management tools is increasing

- Focus on cost monitoring and efficiency is rising

How We Chose These Data Observability Tools (Methodology)

We selected the Top 10 tools based on:

- Market adoption and category leadership

- Data monitoring and anomaly detection capabilities

- Lineage and root-cause analysis features

- Integration ecosystem and compatibility

- Ease of use and implementation

- Security and governance readiness

- Scalability across large data environments

- Innovation in AI and automation

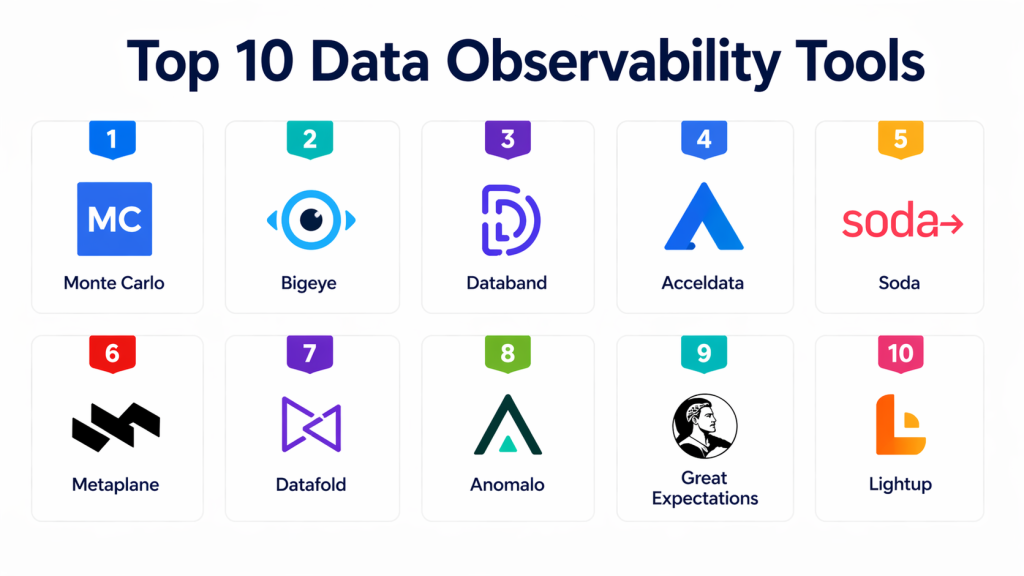

Top 10 Data Observability Tools

#1 — Monte Carlo

Short description: Monte Carlo is one of the most recognized data observability platforms. It provides end-to-end visibility into data pipelines and detects anomalies across datasets, with a focus on data reliability and reducing downtime. It is widely used by enterprises and is best suited to large-scale data environments.

Key Features

- Automated anomaly detection

- Data lineage tracking

- Data freshness monitoring

- Incident alerting

- Root-cause analysis

- Integration with modern data stacks

Pros

- Strong data reliability focus

- Enterprise-grade features

- Mature platform

Cons

- Premium pricing

- Complex setup

- Requires tuning

Platforms / Deployment

Cloud

Security & Compliance

Supports enterprise security and governance controls.

Integrations & Ecosystem

Integrates with warehouses, pipelines, and BI tools.

Support & Community

Strong enterprise support.

#2 — Bigeye

Short description: Bigeye is a data observability platform focused on monitoring data quality and pipeline health. It provides automated metrics and anomaly detection, and is known for ease of use and fast setup. It suits growing data teams.

Key Features

- Data quality monitoring

- Automated metrics tracking

- Anomaly detection

- Pipeline observability

- Alerting and notifications

- Integration with data tools

Pros

- Easy to use

- Fast deployment

- Good for mid-market teams

Cons

- Limited enterprise depth

- Smaller ecosystem

- Feature maturity evolving

Platforms / Deployment

Cloud

Security & Compliance

Supports modern security controls.

Integrations & Ecosystem

Works with modern data stacks.

Support & Community

Growing adoption.

#3 — Databand

Short description: Databand is a data observability tool focused on pipeline monitoring and orchestration visibility. It helps teams detect failures and troubleshoot issues quickly, and it integrates well with orchestration tools. It is especially useful for data engineering teams that want pipeline-focused observability.

Key Features

- Pipeline monitoring

- Incident detection

- Workflow tracking

- Integration with orchestration tools

- Alerting system

- Data reliability tracking

Pros

- Strong pipeline monitoring

- Good for engineering teams

- Integration with orchestration tools

Cons

- Less focus on business metrics

- UI could improve

- Requires configuration

Platforms / Deployment

Cloud

Security & Compliance

Supports enterprise security features.

Integrations & Ecosystem

Works with orchestration and pipeline tools.

Support & Community

Enterprise support available.

#4 — Acceldata

Short description: Acceldata provides observability across data pipelines, infrastructure, and workloads, with a focus on performance monitoring and data reliability. It offers deep insight into data systems and is aimed at large enterprises.

Key Features

- Data pipeline monitoring

- Infrastructure observability

- Performance analytics

- Data quality monitoring

- Alerting system

- Root-cause analysis

Pros

- Comprehensive observability

- Strong enterprise features

- Good performance insights

Cons

- Complex setup

- Higher cost

- Requires expertise

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Supports enterprise governance controls.

Integrations & Ecosystem

Integrates with enterprise data systems.

Support & Community

Enterprise-level support.

#5 — Soda

Short description: Soda is a data quality and observability platform for monitoring data reliability. It supports both cloud and open-source deployment, and is known for its flexibility and developer-friendly approach. It suits teams that want customizable observability.

Key Features

- Data quality checks

- Anomaly detection

- Open-source components

- Pipeline monitoring

- Custom rule creation

- Integration support

Pros

- Flexible and customizable

- Open-source option

- Developer-friendly

Cons

- Requires setup effort

- Less enterprise polish

- Limited UI features

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Depends on deployment model.

Integrations & Ecosystem

Works with modern data tools.

Support & Community

Strong open-source community.

#6 — Metaplane

Short description: Metaplane is a data observability platform focused on monitoring data quality and detecting anomalies, using machine learning to identify issues. It is easy to integrate and use, which makes it a good fit for growing organizations.

Key Features

- ML-based anomaly detection

- Data quality monitoring

- Pipeline observability

- Alerting system

- Integration with data tools

- Easy setup

Pros

- Simple to use

- ML-driven insights

- Good for modern teams

Cons

- Smaller platform

- Limited enterprise features

- Ecosystem still growing

Platforms / Deployment

Cloud

Security & Compliance

Supports modern security controls.

Integrations & Ecosystem

Works with data pipelines and warehouses.

Support & Community

Growing adoption.

#7 — Datafold

Short description: Datafold focuses on data quality and testing rather than full observability. It helps detect data changes and issues before deployment, which makes it especially useful in CI/CD workflows. It is popular among data engineering teams as a complement to a monitoring platform.
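As a rough illustration of what data diffing means, the sketch below compares two versions of a small dataset by primary key and reports added, removed, and changed rows. This is a generic, in-memory example and not Datafold's implementation or API. The function `diff_rows` and the sample rows are hypothetical.

```python
def diff_rows(before, after, key):
    """Compare two lists of row dicts by primary key.

    Returns the keys that were added, removed, or whose values changed.
    """
    old = {row[key]: row for row in before}
    new = {row[key]: row for row in after}
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Hypothetical table contents before and after a transformation change.
before = [
    {"id": 1, "amount": 10},
    {"id": 2, "amount": 20},
    {"id": 3, "amount": 30},
]
after = [
    {"id": 1, "amount": 10},
    {"id": 2, "amount": 25},
    {"id": 4, "amount": 40},
]

print(diff_rows(before, after, key="id"))
# {'added': [4], 'removed': [3], 'changed': [2]}
```

Run in a CI job against a staging build, a comparison like this surfaces unintended changes before they reach production tables.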

Key Features

- Data diffing

- Data testing

- Pipeline validation

- CI/CD integration

- Change detection

- Data quality monitoring

Pros

- Strong testing capabilities

- Useful for CI/CD

- Developer-friendly

Cons

- Not a full observability platform

- Limited monitoring features

- Narrow focus

Platforms / Deployment

Cloud

Security & Compliance

Supports standard security practices.

Integrations & Ecosystem

Works with CI/CD and data tools.

Support & Community

Active user base.

#8 — Anomalo

Short description: Anomalo is a data quality monitoring platform built around anomaly detection. It uses machine learning to identify data issues and help ensure data reliability, and it is designed for enterprise use.

Key Features

- ML-based anomaly detection

- Data quality monitoring

- Automated alerts

- Integration with data tools

- Data validation

- Reporting features

Pros

- Strong anomaly detection

- Enterprise focus

- Easy integration

Cons

- Limited broader observability

- Premium pricing

- Smaller ecosystem

Platforms / Deployment

Cloud

Security & Compliance

Supports enterprise security controls.

Integrations & Ecosystem

Works with modern data platforms.

Support & Community

Enterprise support available.

#9 — Great Expectations

Short description: Great Expectations is an open-source data quality framework that helps teams define, test, and validate data. It is widely used in data engineering workflows and is flexible and customizable.

Key Features

- Data validation

- Testing framework

- Open-source

- Custom rules

- Integration support

- Documentation generation

Pros

- Highly flexible

- Open-source

- Strong community

Cons

- Requires setup

- Not full observability

- Limited UI

Platforms / Deployment

Self-hosted

Security & Compliance

Depends on deployment.

Integrations & Ecosystem

Works with data pipelines.

Support & Community

Strong open-source community.

#10 — Lightup

Short description: Lightup is a data observability platform focused on monitoring data quality and reliability in modern data environments. It provides real-time insight into data systems and helps teams detect and resolve issues quickly. It is a growing player in the space.

Key Features

- Real-time monitoring

- Data quality tracking

- Anomaly detection

- Alerting system

- Integration support

- Observability dashboards

Pros

- Real-time insights

- Easy to use

- Modern platform

Cons

- Smaller ecosystem

- Limited enterprise maturity

- Feature depth evolving

Platforms / Deployment

Cloud

Security & Compliance

Supports standard security controls.

Integrations & Ecosystem

Works with modern data tools.

Support & Community

Growing adoption.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Monte Carlo | Enterprise observability | Web / Cloud | Cloud | End-to-end data monitoring | N/A |
| Bigeye | Mid-market monitoring | Web / Cloud | Cloud | Easy setup | N/A |
| Databand | Pipeline monitoring | Web / Cloud | Cloud | Workflow tracking | N/A |
| Acceldata | Enterprise performance monitoring | Web / Cloud | Cloud / Hybrid | Infrastructure observability | N/A |
| Soda | Flexible observability | Web / Cloud | Cloud / Self-hosted | Open-source support | N/A |
| Metaplane | ML anomaly detection | Web / Cloud | Cloud | ML-driven monitoring | N/A |
| Datafold | Data testing | Web / Cloud | Cloud | Data diffing | N/A |
| Anomalo | Data quality monitoring | Web / Cloud | Cloud | Anomaly detection | N/A |
| Great Expectations | Open-source validation | Web | Self-hosted | Data testing framework | N/A |
| Lightup | Real-time monitoring | Web / Cloud | Cloud | Real-time insights | N/A |

Evaluation & Scoring of Data Observability Tools

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Total |
|---|---|---|---|---|---|---|---|---|
| Monte Carlo | 9.5 | 8.0 | 9.0 | 9.2 | 9.0 | 9.0 | 7.5 | 8.87 |
| Bigeye | 8.5 | 9.0 | 8.5 | 8.5 | 8.5 | 8.5 | 8.5 | 8.57 |
| Databand | 8.7 | 8.0 | 8.6 | 8.6 | 8.7 | 8.6 | 8.2 | 8.49 |
| Acceldata | 9.0 | 7.0 | 8.5 | 9.0 | 9.0 | 8.8 | 7.8 | 8.44 |
| Soda | 8.3 | 7.8 | 8.4 | 8.2 | 8.3 | 8.0 | 8.8 | 8.26 |
| Metaplane | 8.4 | 8.8 | 8.2 | 8.3 | 8.4 | 8.2 | 8.5 | 8.38 |
| Datafold | 7.8 | 8.2 | 8.3 | 8.0 | 8.2 | 8.1 | 8.4 | 8.10 |
| Anomalo | 8.5 | 8.3 | 8.2 | 8.6 | 8.4 | 8.3 | 7.8 | 8.30 |
| Great Expectations | 7.9 | 7.0 | 8.0 | 7.8 | 8.0 | 8.0 | 9.0 | 8.00 |
| Lightup | 8.2 | 8.5 | 8.0 | 8.1 | 8.3 | 8.0 | 8.3 | 8.20 |

Scores are comparative rather than absolute. Use them to guide an evaluation, not as a substitute for piloting a tool against your own data stack.
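The scoring table does not state how the Total column is derived. The sketch below assumes, purely as a working hypothesis, that it is an unweighted mean of the seven criteria. Under that assumption most rows reproduce (for example Bigeye and Databand), while a few (Monte Carlo, Metaplane, Datafold, Great Expectations) differ slightly from the published totals, which may reflect a weighting the table does not describe.

```python
# Criterion scores copied from the table, in column order:
# Core, Ease, Integrations, Security, Performance, Support, Value.
scores = {
    "Bigeye": [8.5, 9.0, 8.5, 8.5, 8.5, 8.5, 8.5],
    "Databand": [8.7, 8.0, 8.6, 8.6, 8.7, 8.6, 8.2],
    "Monte Carlo": [9.5, 8.0, 9.0, 9.2, 9.0, 9.0, 7.5],
}


def unweighted_total(criteria):
    """Mean of the criterion scores, rounded to two decimals (assumed formula)."""
    return round(sum(criteria) / len(criteria), 2)


for tool, criteria in scores.items():
    print(tool, unweighted_total(criteria))
# Bigeye 8.57        (published: 8.57)
# Databand 8.49      (published: 8.49)
# Monte Carlo 8.74   (published: 8.87)
```

If some criteria matter more to your team, replace the plain mean with your own weights and re-rank the tools accordingly.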

Which Data Observability Tool Is Right for You?

Solo / Freelancer

Use open-source tools like Great Expectations.

SMB

Bigeye or Metaplane are good options.

Mid-Market

Soda, Metaplane, or Databand work well.

Enterprise

Monte Carlo, Acceldata, and Anomalo are top choices.

Budget vs Premium

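Open-source options such as Great Expectations and Soda keep licensing costs low but require more setup effort. Enterprise platforms such as Monte Carlo and Acceldata cost more and offer deeper features and support.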

Feature Depth vs Ease of Use

Monte Carlo = depth

Bigeye = ease

Soda = flexibility

Integrations & Scalability

Choose tools compatible with your data stack.

Security & Compliance Needs

Enterprise tools offer stronger controls.

Frequently Asked Questions (FAQs)

1. What is data observability?

Data observability refers to the ability to monitor, track, and understand the health of data systems across pipelines, datasets, and analytics layers. It combines metrics like freshness, volume, schema, and lineage to detect issues early. This helps teams identify problems before they impact reports or business decisions. It is a proactive approach rather than reactive troubleshooting. Modern observability tools automate much of this process using AI and metadata.
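Of the signals listed above, schema is the simplest to illustrate. The following sketch compares an expected column-to-type mapping with an observed one and reports drift. The function `schema_drift`, the column names, and the type strings are hypothetical. A real tool would read both schemas from warehouse metadata.

```python
def schema_drift(expected, observed):
    """Return human-readable messages describing differences between two schemas.

    Both arguments map column name -> type name.
    """
    messages = []
    for column in sorted(expected.keys() - observed.keys()):
        messages.append(f"column removed: {column}")
    for column in sorted(observed.keys() - expected.keys()):
        messages.append(f"column added: {column}")
    for column in sorted(expected.keys() & observed.keys()):
        if expected[column] != observed[column]:
            messages.append(
                f"type changed: {column} {expected[column]} -> {observed[column]}"
            )
    return messages


# Hypothetical schemas for the same table on two consecutive days.
yesterday = {"order_id": "INTEGER", "amount": "NUMERIC", "region": "TEXT"}
today = {"order_id": "INTEGER", "amount": "TEXT", "country": "TEXT"}

for message in schema_drift(yesterday, today):
    print(message)
# column removed: region
# column added: country
# type changed: amount NUMERIC -> TEXT
```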

2. Why is data observability important?

Data observability ensures that data used for analytics, reporting, and AI is reliable and accurate. Without it, issues like broken pipelines or incorrect data can go unnoticed and lead to poor decisions. It reduces downtime and improves trust in data systems. It also helps teams debug problems faster using lineage and root-cause analysis. Overall, it is critical for maintaining data quality at scale.
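To show how lineage supports root-cause analysis, here is a minimal sketch that walks upstream from a broken asset and keeps only the failing assets whose own inputs are healthy. The lineage graph, asset names, and both functions are hypothetical. Commercial platforms build this graph automatically from query logs and metadata.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets it reads from.
LINEAGE = {
    "revenue_dashboard": ["daily_revenue"],
    "daily_revenue": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
    "orders_raw": [],
    "fx_rates": [],
}


def upstream_assets(asset, lineage):
    """Breadth-first list of every asset that feeds into `asset`."""
    seen, ordered = set(), []
    queue = deque(lineage.get(asset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        ordered.append(node)
        queue.extend(lineage.get(node, []))
    return ordered


def root_cause_candidates(asset, lineage, failing):
    """Failing upstream assets with no failing parent of their own."""
    return [
        node
        for node in upstream_assets(asset, lineage)
        if node in failing
        and not any(parent in failing for parent in lineage.get(node, []))
    ]


failing = {"daily_revenue", "orders_clean", "orders_raw"}
print(root_cause_candidates("revenue_dashboard", LINEAGE, failing))
# ['orders_raw']
```

The point of the filter is to separate the origin of an incident from the downstream assets that merely inherited it.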

3. Who uses data observability tools?

These tools are primarily used by data engineers, analytics engineers, and data platform teams. They help monitor pipelines, ensure data quality, and maintain system reliability. Data analysts and business teams also benefit indirectly by receiving more accurate and consistent data. In larger organizations, data reliability engineers may specifically manage observability. It is a cross-functional capability supporting the entire data ecosystem.

4. Are data observability tools cloud-based?

Most modern data observability tools are cloud-based, offering scalability and easier integration with cloud data platforms. However, some tools also provide hybrid or self-hosted deployment options for organizations with strict compliance or security requirements. Cloud-based tools are easier to deploy and maintain. They also support real-time monitoring and distributed data systems. The choice depends on infrastructure and governance needs.

5. What is anomaly detection in data observability?

Anomaly detection identifies unusual patterns or unexpected changes in data, such as sudden drops in volume or spikes in values. These anomalies often indicate underlying issues like pipeline failures or incorrect transformations. Modern tools use machine learning to detect anomalies automatically. This reduces the need for manual monitoring and improves detection speed. It is a core feature of most observability platforms.
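A sudden drop in volume can be flagged with something as simple as a z-score against recent history. The sketch below is a deliberately basic statistical check, not the machine-learning approach any particular vendor uses. The function `is_anomalous`, the threshold of 3 standard deviations, and the row counts are illustrative assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` when it lies more than `threshold` standard deviations
    from the mean of `history` (requires at least two historical points)."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All historical values are identical, so any change is unusual.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily row counts for one table over the past week.
daily_rows = [10120, 9980, 10050, 10210, 9890, 10075, 10010]

print(is_anomalous(daily_rows, 4200))   # True: volume dropped sharply
print(is_anomalous(daily_rows, 10100))  # False: within normal variation
```

A fixed z-score ignores seasonality and trends, which is one reason production tools rely on learned models instead.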

6. Do data observability tools support real-time monitoring?

Many modern tools support real-time or near real-time monitoring, depending on the architecture and data pipelines. Real-time capabilities are important for use cases like streaming data and operational analytics. However, not all organizations require real-time monitoring, as batch processing is still common. Buyers should evaluate latency requirements carefully. Real-time features may also impact cost and complexity.

7. Are data observability tools expensive?

Pricing varies widely depending on the vendor, scale of data, and feature set. Enterprise-grade platforms can be expensive due to advanced capabilities and scalability. However, there are also open-source and mid-market options that offer more affordable entry points. Organizations should consider total cost of ownership, including setup and maintenance. The investment is often justified by reduced downtime and improved data reliability.

8. Can these tools integrate with data pipelines?

Yes, most data observability tools integrate with modern data pipelines, ETL/ELT tools, and orchestration systems. This integration allows them to monitor data flow and detect issues at different stages. They also connect with warehouses and BI tools to provide end-to-end visibility. Integration is a key factor when selecting a tool. Compatibility with your existing stack is essential.

9. Is setup complex for data observability tools?

Setup complexity depends on the tool and the organization’s data architecture. Some modern tools offer quick deployment with minimal configuration, while others require deeper setup and tuning. Enterprise environments may need more customization and governance setup. A phased implementation approach is often recommended. Proper onboarding and training can significantly reduce complexity.

10. Do data observability tools improve data quality?

Yes, data observability tools directly improve data quality by detecting issues early and enabling faster resolution. They provide insights into anomalies, schema changes, and pipeline failures. This helps teams maintain clean and reliable datasets. Over time, observability also helps enforce better data practices. It is a key component of a strong data quality strategy.

Conclusion

Data observability tools have become a critical layer in modern data architectures, helping organizations ensure that their data pipelines, datasets, and analytics outputs remain reliable and trustworthy. As data environments grow more complex with multiple sources, transformations, and real-time workflows, the risk of silent failures increases. Observability platforms address this challenge by providing continuous monitoring, anomaly detection, and deep visibility into data health. This not only reduces downtime but also builds confidence in business decisions driven by data.

The right tool depends on your organization’s scale, technical maturity, and specific use cases. Enterprise platforms like Monte Carlo and Acceldata offer deep capabilities for large environments, while tools like Bigeye, Metaplane, and Soda provide more accessible options for growing teams. Open-source solutions like Great Expectations are ideal for engineering-led approaches. Instead of choosing a tool based only on features, focus on how well it integrates with your existing stack and supports your data reliability goals. Start with a pilot, validate real-world performance, and scale gradually to build a resilient and trustworthy data ecosystem.