Introduction

Synthetic data generation tools create artificial data that mimics the statistical properties of real-world datasets without reproducing the original records. They are widely used in AI, machine learning, cybersecurity, and analytics to work around data privacy constraints, limited datasets, and regulatory restrictions.
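To make "mimics statistical properties" concrete, here is a minimal sketch in plain Python. It assumes a toy two-column dataset (the `real` rows, column meanings, and function names are all hypothetical) and samples each column independently, which is far simpler than what the commercial tools below do.

```python
import random
import statistics
from collections import Counter

def fit_numeric(values):
    """Summarise a numeric column by its mean and standard deviation."""
    return statistics.mean(values), statistics.stdev(values)

def fit_categorical(values):
    """Summarise a categorical column by its observed category counts."""
    counts = Counter(values)
    categories = sorted(counts)
    weights = [counts[c] for c in categories]
    return categories, weights

def sample_synthetic(real_rows, n, seed=0):
    """Draw n synthetic (amount, segment) rows from the fitted summaries.

    Assumption: columns are sampled independently, so cross-column
    correlations are NOT preserved. Real tools model joint structure.
    """
    rng = random.Random(seed)
    mu, sigma = fit_numeric([r[0] for r in real_rows])
    categories, weights = fit_categorical([r[1] for r in real_rows])
    amounts = [rng.gauss(mu, sigma) for _ in range(n)]
    segments = rng.choices(categories, weights=weights, k=n)
    return list(zip(amounts, segments))

# Hypothetical "real" data: (transaction amount, customer segment).
real = [(120.0, "retail"), (80.5, "retail"), (310.0, "business"),
        (95.0, "retail"), (270.0, "business"), (60.0, "retail")]
synthetic = sample_synthetic(real, n=5000)
```

None of the synthetic rows is copied from `real`, yet the average amount and the share of each segment stay close to the originals. A Gaussian fit is a deliberate simplification; it can produce negative amounts, for example, which a production generator would need to handle.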

In the modern AI ecosystem, synthetic data has become essential for building scalable and privacy-safe machine learning systems. Organizations use these tools to train models, test systems, simulate environments, and improve data diversity without exposing sensitive information.

These platforms are often deployed alongside identity management, Zero Trust architectures, and access control systems, which helps organizations meet compliance requirements while developing AI safely.

Real-world use cases include:

- Training machine learning models without real customer data

- Testing fraud detection and cybersecurity systems

- Simulating financial transactions for risk modeling

- Generating healthcare datasets for research

- Enhancing AI model robustness with diverse datasets

What buyers should evaluate:

- Data realism and statistical accuracy

- Privacy preservation capabilities

- Scalability and performance

- Integration with ML pipelines

- Support for structured and unstructured data

- Compliance with data regulations

- Ease of use and automation features

- Deployment flexibility (cloud/on-premise)
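"Data realism and statistical accuracy" can be checked independently of any vendor report. One common approach, sketched here under the assumption that you compare a single numeric column at a time, is the two-sample Kolmogorov-Smirnov statistic. The function name is illustrative and not taken from any of the tools in this list.

```python
import bisect

def ks_statistic(real, synthetic):
    """Two-sample Kolmogorov-Smirnov statistic.

    Returns the largest gap between the empirical CDFs of the two samples:
    0.0 means the distributions look identical, 1.0 means fully separated.
    """
    real_sorted = sorted(real)
    synth_sorted = sorted(synthetic)
    max_gap = 0.0
    # Both CDFs are step functions, so checking every observed value suffices.
    for x in set(real_sorted) | set(synth_sorted):
        cdf_real = bisect.bisect_right(real_sorted, x) / len(real_sorted)
        cdf_synth = bisect.bisect_right(synth_sorted, x) / len(synth_sorted)
        max_gap = max(max_gap, abs(cdf_real - cdf_synth))
    return max_gap

same = ks_statistic([1, 2, 3, 4], [1, 2, 3, 4])      # identical samples
shifted = ks_statistic([1, 2, 3, 4], [3, 4, 5, 6])   # partially overlapping
disjoint = ks_statistic([1, 2, 3], [10, 11, 12])     # no overlap
```

A lower value suggests the synthetic column tracks the real one more closely. This only covers one column at a time, so it says nothing about whether relationships between columns are preserved.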

Best for: Data scientists, AI researchers, cybersecurity teams, healthcare organizations, fintech companies, and enterprises dealing with sensitive data.

Not ideal for: Simple analytics tasks or teams that already have large, high-quality real datasets.

Key Trends in Synthetic Data Generation Tools

- AI-powered data generation using deep learning models

- Privacy-first synthetic data for regulatory compliance

- Integration with MLOps and data pipelines

- Support for multimodal data (text, images, tabular, time-series)

- Real-time synthetic data generation for simulations

- Zero Trust and privacy-preserving AI architectures

- Automated data augmentation for training ML models

- Cloud-native synthetic data platforms gaining adoption

- Synthetic data validation and quality scoring systems

- Growing use in cybersecurity and fraud simulation

How We Selected Synthetic Data Generation Tools (Methodology)

We evaluated tools based on:

- Data quality and realism

- Privacy preservation mechanisms

- Scalability and performance

- Integration with AI/ML ecosystems

- Ease of use and automation capabilities

- Security and compliance support

- Deployment flexibility

- Market adoption and maturity

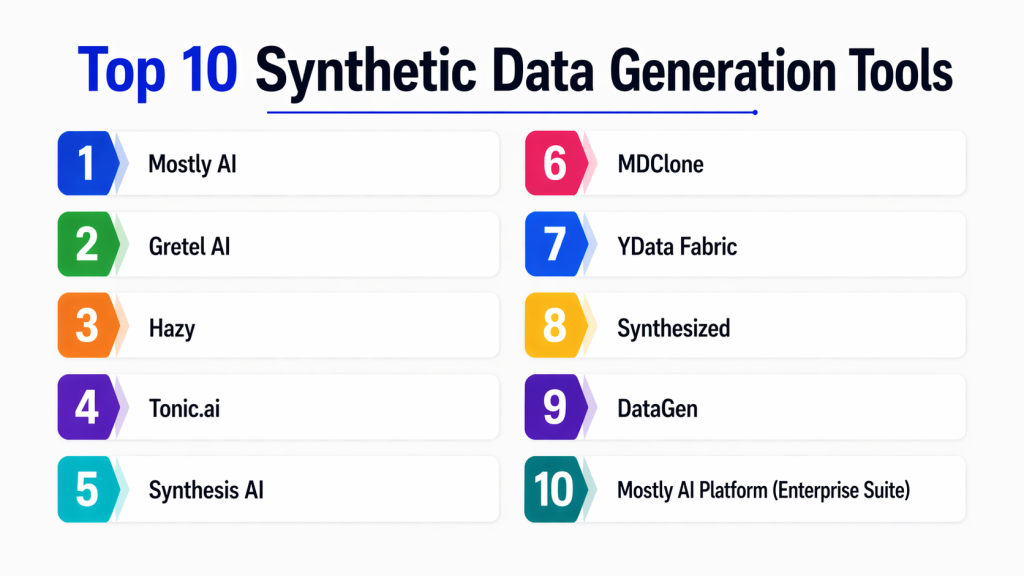

Top 10 Synthetic Data Generation Tools

#1 — Mostly AI

Short description:

Mostly AI is a synthetic data platform focused on privacy-preserving data generation. It uses AI models to create realistic tabular data and suits enterprises that handle sensitive customer data. It is designed to support GDPR compliance and is widely used in the finance and insurance industries.

Key Features

- AI-driven synthetic data generation

- Privacy preservation models

- Tabular data support

- Data quality validation

- Enterprise integrations

- API-based automation

Pros

- High data realism

- Strong privacy protection

Cons

- Enterprise pricing

- Limited free usage

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

GDPR compliance, data anonymization

Compliance: Varies

Integrations & Ecosystem

- Data warehouses

- ML pipelines

- APIs

Support & Community

Enterprise-grade support.

#2 — Gretel AI

Short description:

Gretel AI is a synthetic data platform designed for developers and data scientists. It generates safe, privacy-preserving datasets using AI models. Supports structured and unstructured data. Ideal for ML training and testing. Focuses on automation and scalability.

Key Features

- Synthetic data APIs

- Data anonymization

- Multi-format support

- Model training tools

- Data validation

Pros

- Developer-friendly

- Fast integration

Cons

- Requires setup for advanced use

- Limited offline support

Platforms / Deployment

Cloud

Security & Compliance

Privacy-preserving AI models

Compliance: Not publicly stated

Integrations & Ecosystem

- ML frameworks

- Data pipelines

- APIs

Support & Community

Strong developer community.

#3 — Hazy

Short description:

Hazy is an enterprise synthetic data platform focused on regulated industries. It generates high-quality synthetic datasets for secure AI development. Ideal for banking and healthcare. Ensures strong privacy guarantees. Designed for enterprise-scale use.

Key Features

- AI-based synthetic data generation

- Enterprise governance

- Structured data support

- Data anonymization

- Compliance tools

Pros

- Strong enterprise focus

- High data accuracy

Cons

- Expensive

- Limited small-team use

Platforms / Deployment

Cloud / On-premise

Security & Compliance

GDPR, privacy controls

Compliance: Enterprise-grade

Integrations & Ecosystem

- Data platforms

- ML systems

Support & Community

Enterprise support.

#4 — Tonic.ai

Short description:

Tonic.ai is a synthetic data platform that creates realistic datasets for development and testing. It focuses on privacy-safe data generation. Widely used in software engineering and QA environments. Helps replace sensitive production data. Ideal for secure development workflows.

Key Features

- Data masking and generation

- Structured data support

- API-driven workflows

- Database integration

- Privacy controls
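Data masking, the first feature above, is easy to illustrate in isolation. The sketch below is a generic example of deterministic pseudonymization using a keyed hash; it is not Tonic.ai's API, and the key, field names, and `mask_value` helper are hypothetical.

```python
import hashlib
import hmac

def mask_value(value: str, secret_key: bytes, prefix: str = "user_") -> str:
    """Replace a sensitive string with a stable pseudonym.

    The same input and key always give the same token, so joins across
    tables still line up, but the original value is not in the output.
    """
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return prefix + digest[:12]

# Hypothetical key for the demo; in practice load it from a secret store.
key = b"demo-key-change-me"

rows = [{"email": "ana@example.com", "plan": "pro"},
        {"email": "bo@example.com", "plan": "free"},
        {"email": "ana@example.com", "plan": "pro"}]

# Mask only the sensitive column and leave the rest of each row untouched.
masked = [{**row, "email": mask_value(row["email"], key)} for row in rows]
```

Because the mapping is deterministic, the first and third rows receive the same token, which keeps referential integrity in test databases. Masking alone is weaker than full synthesis: the non-masked columns are still real values.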

Pros

- Easy to use

- Strong privacy features

Cons

- Limited advanced AI features

- Enterprise pricing

Platforms / Deployment

Cloud / On-premise

Security & Compliance

Data anonymization, encryption

Compliance: Varies

Integrations & Ecosystem

- Databases

- Dev tools

Support & Community

Strong enterprise support.

#5 — Synthesis AI

Short description:

Synthesis AI specializes in generating synthetic visual data for computer vision applications. It creates high-quality synthetic images and videos. Ideal for robotics and autonomous systems. Helps train AI models without real-world data dependency. Focused on visual AI.

Key Features

- Synthetic image generation

- 3D environment simulation

- Computer vision support

- Annotation tools

- Dataset generation

Pros

- Excellent for vision AI

- High realism

Cons

- Narrow use case

- Limited tabular support

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- CV frameworks

- AI pipelines

Support & Community

Specialized support.

#6 — DataGen

Short description:

DataGen is a synthetic data platform focused on enterprise analytics and ML workflows. It generates structured datasets for training AI models. Designed for scalability and governance. Used in finance and healthcare industries. Focuses on compliance.

Key Features

- Synthetic tabular data

- Data governance tools

- Privacy preservation

- ML-ready datasets

Pros

- Enterprise-ready

- Strong compliance

Cons

- Limited community

- Complex setup

Platforms / Deployment

Cloud

Security & Compliance

Privacy-focused architecture

Compliance: Varies

Integrations & Ecosystem

- Data warehouses

- ML tools

Support & Community

Enterprise support.

#7 — MDClone

Short description:

MDClone is a healthcare-focused synthetic data platform. It generates anonymized datasets for medical research. Widely used in hospitals and research institutions. Ensures strict privacy compliance. Ideal for healthcare analytics.

Key Features

- Healthcare synthetic data

- Privacy preservation

- Data exploration tools

- Secure analytics

Pros

- Strong healthcare focus

- High compliance

Cons

- Industry-specific

- Limited general use

Platforms / Deployment

Cloud / On-premise

Security & Compliance

HIPAA, GDPR compliance (varies)

Integrations & Ecosystem

- Healthcare systems

- Analytics tools

Support & Community

Enterprise healthcare support.

#8 — Mostly AI Synthetic Data Platform

Short description:

This entry covers the Mostly AI platform (also listed at #1) with an emphasis on enterprise-grade synthetic datasets. It focuses on statistical accuracy and privacy protection, is designed for large organizations, and is used in financial services and insurance. It supports scalable data pipelines.

Key Features

- AI-based data generation

- Privacy protection

- Enterprise APIs

- Data validation

Pros

- High-quality outputs

- Secure

Cons

- Costly

- Enterprise-focused

Platforms / Deployment

Cloud

Security & Compliance

Privacy-first design

Compliance: Enterprise-grade

Integrations & Ecosystem

- ML pipelines

- Data platforms

Support & Community

Enterprise support.

#9 — YData Fabric

Short description:

YData Fabric is a data-centric AI platform that includes synthetic data generation capabilities. It helps improve data quality for ML models. Focuses on data privacy and augmentation. Ideal for AI pipelines.

Key Features

- Synthetic data generation

- Data quality tools

- ML pipeline integration

- Privacy controls

Pros

- Strong AI focus

- Good integration

Cons

- Limited standalone features

- Requires setup

Platforms / Deployment

Cloud

Security & Compliance

Privacy-preserving tools

Compliance: Not publicly stated

Integrations & Ecosystem

- ML frameworks

- Data pipelines

Support & Community

Growing ecosystem.

#10 — Synthesized

Short description:

Synthesized is a synthetic data platform focused on privacy-safe data generation. It creates realistic datasets for testing and ML training. Designed for enterprise and regulated industries. Focuses on data compliance and governance.

Key Features

- Synthetic data generation

- Privacy preservation

- Data validation

- Enterprise APIs

Pros

- Strong compliance focus

- High-quality data

Cons

- Smaller ecosystem

- Enterprise pricing

Platforms / Deployment

Cloud

Security & Compliance

Privacy-first architecture

Compliance: Varies

Integrations & Ecosystem

- Data systems

- ML pipelines

Support & Community

Enterprise support.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Mostly AI | Enterprise | Cloud | Hybrid | Privacy-safe data | N/A |
| Gretel AI | Developers | Cloud | Cloud | APIs | N/A |
| Hazy | Finance | Multi | Hybrid | Governance | N/A |
| Tonic.ai | Dev teams | Multi | Hybrid | Data masking | N/A |
| Synthesis AI | Vision AI | Cloud | Cloud | Image generation | N/A |
| DataGen | Enterprise | Cloud | Cloud | Compliance | N/A |
| MDClone | Healthcare | Multi | Hybrid | Medical data | N/A |
| Mostly AI (Alt) | Finance | Cloud | Cloud | Accuracy | N/A |
| YData | AI teams | Cloud | Cloud | Data quality | N/A |
| Synthesized | Enterprise | Cloud | Cloud | Privacy focus | N/A |

Evaluation & Scoring of Synthetic Data Tools

| Tool | Core | Ease | Integration | Security | Performance | Support | Value | Total |
|---|---|---|---|---|---|---|---|---|
| Mostly AI | 10 | 7 | 9 | 10 | 9 | 9 | 7 | 8.7 |
| Gretel AI | 9 | 9 | 9 | 9 | 9 | 8 | 8 | 8.7 |
| Hazy | 10 | 7 | 8 | 10 | 9 | 9 | 6 | 8.4 |
| Tonic.ai | 9 | 9 | 8 | 9 | 8 | 8 | 8 | 8.4 |
| Synthesis AI | 9 | 7 | 8 | 8 | 9 | 8 | 7 | 8.0 |
| DataGen | 8 | 7 | 8 | 9 | 8 | 8 | 7 | 7.9 |
| MDClone | 9 | 8 | 8 | 10 | 9 | 9 | 6 | 8.4 |
| YData | 8 | 8 | 8 | 8 | 8 | 8 | 8 | 8.0 |
| Synthesized | 9 | 8 | 8 | 9 | 8 | 8 | 7 | 8.1 |
| Mostly AI (Alt) | 10 | 7 | 9 | 10 | 9 | 9 | 7 | 8.7 |
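The table does not state how the Total column is derived. The Mostly AI, Hazy, DataGen, and YData rows are consistent with an unweighted mean of the seven criteria rounded to one decimal, so the sketch below assumes that formula. Treat it as a way to re-run the scoring with your own numbers, not as the documented method.

```python
def total_score(scores):
    """Unweighted mean of the criterion scores, rounded to one decimal."""
    return round(sum(scores) / len(scores), 1)

# Criterion order: Core, Ease, Integration, Security, Performance, Support, Value.
rows = {
    "Mostly AI": [10, 7, 9, 10, 9, 9, 7],
    "Hazy": [10, 7, 8, 10, 9, 9, 6],
    "DataGen": [8, 7, 8, 9, 8, 8, 7],
    "YData": [8, 8, 8, 8, 8, 8, 8],
}

totals = {tool: total_score(scores) for tool, scores in rows.items()}
```

If some criteria matter more to you (security in a regulated industry, for instance), replacing the plain mean with a weighted one will reorder the ranking, which is a good reason to rescore rather than rely on the totals as given.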

Which Synthetic Data Tool Is Right for You?

Solo / Freelancer

Use Gretel AI, YData

SMB

Use Tonic.ai, YData

Mid-Market

Use Hazy, Synthesized

Enterprise

Use Mostly AI, MDClone, DataGen

Budget vs Premium

Budget: Gretel AI

Premium: Hazy, Mostly AI

Feature Depth vs Ease

Depth: Hazy

Ease: Gretel AI

Security & Compliance

Best: MDClone, Mostly AI

Frequently Asked Questions (FAQs)

1. What is synthetic data?

Synthetic data is artificially generated data that mimics real-world datasets. It preserves statistical patterns without using real sensitive information. It is used for AI training and testing. Helps with privacy compliance. Widely used in machine learning.

2. Why use synthetic data tools?

They help solve data privacy issues and reduce dependency on real datasets. Improve model training diversity. Enable safe testing environments. Reduce compliance risks. Support scalable AI development.

3. Is synthetic data accurate?

It can be. Modern tools generate highly realistic data, but accuracy depends on the platform and on the quality of the source data. Advanced AI models improve quality, though rare edge cases may not be reproduced faithfully. Validation tools help confirm that the output is reliable.

4. Is synthetic data legal?

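Generally yes. Properly generated synthetic data does not contain real personal records, which helps with privacy-law compliance, and it is widely used in regulated industries. Legality still depends on implementation: a poorly configured generator can reproduce or closely approximate real records, so governance policies continue to apply.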
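Whether a synthetic dataset really contains no real records is something you can test. The sketch below shows two simple checks on numeric rows: exact copies, and distance to the closest real record. The sample rows and function names are hypothetical, and the distance is computed on unscaled columns for brevity.

```python
import math

def exact_copies(real_rows, synthetic_rows):
    """Return synthetic rows that are identical to some real row."""
    real_set = set(real_rows)
    return [row for row in synthetic_rows if row in real_set]

def distance_to_closest_record(real_rows, synthetic_row):
    """Euclidean distance from one synthetic row to its nearest real row.

    Assumption: all columns are numeric. In practice, scale the columns
    first so that large-valued fields such as income do not dominate.
    """
    return min(math.dist(synthetic_row, real_row) for real_row in real_rows)

# Hypothetical (age, income) rows.
real = [(34, 52000.0), (51, 87000.0), (29, 41000.0)]
synthetic = [(33, 50500.0), (51, 87000.0), (45, 70000.0)]

leaked = exact_copies(real, synthetic)
distances = [distance_to_closest_record(real, row) for row in synthetic]
```

Here the second synthetic row is an exact copy of a real one, so it shows up in `leaked` with a distance of zero. Very small non-zero distances deserve the same scrutiny, since a near-copy can identify a person almost as well as an exact one.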

5. Can synthetic data replace real data?

Not completely. It complements real data. Useful for training and testing. Real data is still needed for validation. Hybrid approach works best.

6. Are synthetic data tools expensive?

Open-source tools are free. Enterprise platforms are paid. Pricing varies by usage. Cloud tools follow subscription models. Costs depend on scale.

7. Can synthetic data be used for AI training?

Yes, it is widely used for training ML models. It helps improve dataset diversity and can reduce bias when the generator is configured with care. It is common in computer vision and NLP and supports safe experimentation.

8. What industries use synthetic data?

Finance, healthcare, retail, cybersecurity, and automotive industries. Used wherever data privacy is important. Also used in AI research. Growing adoption across enterprises.

9. Are synthetic data tools secure?

Yes, enterprise platforms include privacy protection. They use anonymization and encryption. Security depends on implementation. Compliance varies by vendor. Always evaluate governance features.

10. What are the limitations of synthetic data?

It may not capture all real-world complexity. Requires careful validation. Quality depends on generation models. Not a full replacement for real data. Best used alongside real datasets.

Conclusion

Synthetic data generation tools are transforming how organizations build and train AI models by enabling privacy-safe, scalable, and compliant data usage. They are becoming essential in industries where real data is sensitive or limited, offering a powerful alternative for innovation without compromising security.

The right tool depends on your industry, data complexity, and compliance needs. While enterprise platforms like Mostly AI and Hazy offer strong governance and accuracy, developer-friendly tools like Gretel AI provide flexibility and speed. The best approach is to evaluate multiple tools, test them with real workflows, and choose based on scalability, privacy, and integration requirements.