Introduction

Model monitoring and drift detection tools are essential components of modern machine learning systems: they help ensure that models continue to perform accurately after deployment. Once a model goes live, the real-world data it receives can shift away from the data it was trained on, degrading performance. These tools continuously track model behavior, detect drift, and trigger alerts or retraining workflows.

In today’s AI-driven ecosystem, deploying a model is just the beginning. Organizations must ensure models remain reliable, unbiased, and compliant with regulations. With the rise of MLOps, AI governance, and real-time decision systems, monitoring tools have become critical for maintaining trust in machine learning outcomes.

Common use cases include:

- Detecting data drift and concept drift

- Monitoring prediction accuracy and latency

- Identifying bias and fairness issues

- Ensuring regulatory compliance and auditability

- Triggering automated retraining workflows

Key evaluation criteria buyers should consider:

- Drift detection accuracy and coverage

- Real-time vs batch monitoring capabilities

- Integration with ML pipelines and tools

- Alerting and automation features

- Explainability and observability

- Scalability and performance

- Security and compliance readiness

- Ease of deployment and usability

Best for: ML engineers, data scientists, MLOps teams, and enterprises deploying production-grade AI systems.

Not ideal for: Teams not yet operationalizing models or those working only with static analytics datasets.

Key Trends in Model Monitoring & Drift Detection Tools

- AI-powered anomaly detection: Automated identification of drift and irregular patterns

- Unified ML observability: Combining logs, metrics, and model insights in one platform

- Real-time monitoring: Increasing adoption for low-latency use cases

- Explainability integration: Understanding model decisions alongside performance

- Bias and fairness tracking: Ethical AI becoming a standard requirement

- Cloud-native platforms: Managed services gaining popularity

- MLOps integration: Seamless connection with CI/CD and deployment pipelines

- Hybrid monitoring models: Support for both batch and streaming data

How We Evaluated Model Monitoring & Drift Detection Tools (Methodology)

- Industry adoption and enterprise relevance

- Depth of monitoring and drift detection capabilities

- Performance and scalability benchmarks

- Security and compliance features

- Integration with modern ML ecosystems

- Ease of use and onboarding experience

- Community strength and vendor support

- Cost-effectiveness and deployment flexibility

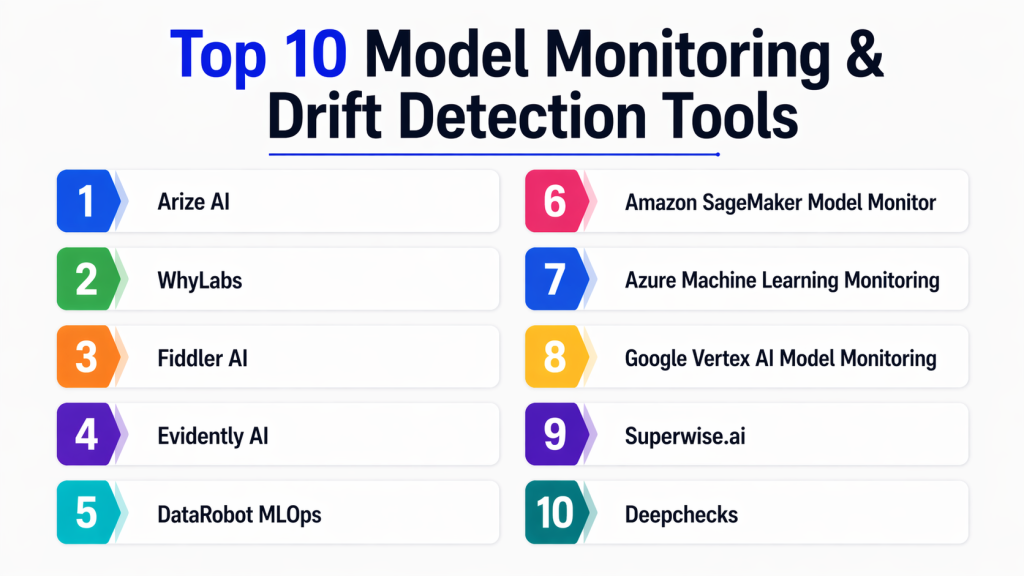

Top 10 Model Monitoring & Drift Detection Tools

#1 — Arize AI

Short description:

Arize AI is a leading ML observability platform designed to monitor, debug, and improve machine learning models in production. It provides deep insights into model performance, helping teams quickly identify issues such as drift, anomalies, and data quality problems. It is widely used by organizations that need real-time visibility into AI systems and want to ensure model reliability at scale.

Key Features

- Data and concept drift detection

- Model performance monitoring

- Root cause analysis tools

- Real-time observability dashboards

- Alerting and anomaly detection

- Data quality monitoring

Pros

- Strong debugging and visualization capabilities

- Scales well for enterprise workloads

Cons

- Requires integration effort

- Premium pricing for advanced features

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, RBAC, audit logging

Integrations & Ecosystem

Arize integrates with modern ML and data platforms to provide end-to-end observability.

- Data warehouses and data lakes

- Python-based ML pipelines

- APIs and monitoring systems

Support & Community

Enterprise-grade support with growing adoption and strong documentation.

#2 — WhyLabs

Short description:

WhyLabs is a model monitoring platform focused on data observability and drift detection. It helps teams detect anomalies early, monitor model inputs and outputs, and maintain data quality across pipelines. It is particularly useful for organizations looking to build reliable and transparent ML systems with minimal overhead.

Key Features

- Data drift detection

- Model performance tracking

- Data profiling and validation

- Alerting and anomaly detection

- Integration with open-source tools

Pros

- Easy to implement and integrate

- Strong focus on data quality

Cons

- Limited advanced customization

- Pricing not always transparent

Platforms / Deployment

- Cloud

Security & Compliance

- Encryption, access control

Integrations & Ecosystem

- Python libraries

- Data pipelines

- APIs

Support & Community

Growing community with solid documentation and support.

#3 — Fiddler AI

Short description:

Fiddler AI is an enterprise platform focused on explainable AI and model monitoring. It helps teams understand model decisions, detect drift, and ensure fairness. It is particularly valuable for industries that require transparency and regulatory compliance.

Key Features

- Explainable AI insights

- Drift and bias detection

- Model performance tracking

- Debugging tools

- Compliance monitoring

Pros

- Strong explainability capabilities

- Enterprise-ready features

Cons

- Complex setup

- Higher cost

Platforms / Deployment

- Cloud / On-premise

Security & Compliance

- Enterprise-grade security features

Integrations & Ecosystem

- ML frameworks

- Data pipelines

- APIs

Support & Community

Enterprise-level support.

#4 — Evidently AI

Short description:

Evidently AI is an open-source tool for monitoring machine learning models and detecting data drift. It provides detailed reports and visualizations that help teams understand how their models perform over time. It is ideal for teams looking for a flexible and cost-effective monitoring solution.

Key Features

- Data drift detection

- Model monitoring dashboards

- Custom metrics and reports

- Visualization tools

- Open-source flexibility

Pros

- Free and customizable

- Strong community support

Cons

- Requires technical setup

- Limited enterprise features

Platforms / Deployment

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python

- Jupyter notebooks

- ML pipelines

Support & Community

Active open-source community.

#5 — DataRobot MLOps

Short description:

DataRobot MLOps is an enterprise-grade solution for managing and monitoring machine learning models. It provides tools for tracking model performance, detecting drift, and ensuring governance across the ML lifecycle.

Key Features

- Model performance monitoring

- Drift detection

- Governance and compliance tools

- Deployment tracking

- Alerting and reporting

Pros

- End-to-end lifecycle support

- Strong governance capabilities

Cons

- Expensive

- Best suited for DataRobot users

Platforms / Deployment

- Cloud / On-premise

Security & Compliance

- Enterprise compliance features

Integrations & Ecosystem

- APIs

- Data pipelines

- ML platforms

Support & Community

Enterprise-grade support.

#6 — Amazon SageMaker Model Monitor

Short description:

SageMaker Model Monitor is a monitoring tool within AWS that tracks model performance and detects drift in production environments. It is ideal for organizations already using AWS for ML workloads.

Key Features

- Data drift detection

- Monitoring dashboards

- Automated alerts

- Integration with SageMaker

- Data quality tracking

Pros

- Seamless AWS integration

- Scalable infrastructure

Cons

- Limited outside AWS

- AWS dependency

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption

Integrations & Ecosystem

- S3

- Lambda

- AWS analytics tools

Support & Community

Strong AWS support.

#7 — Azure ML Monitoring

Short description:

Azure ML Monitoring provides tools for tracking model performance and detecting drift within the Microsoft ecosystem. It is designed for enterprise-scale AI deployments.

Key Features

- Drift detection

- Model performance metrics

- Alerting system

- Integration with Azure ML

- Monitoring dashboards

Pros

- Strong enterprise integration

- Scalable and reliable

Cons

- Azure dependency

- Setup complexity

Platforms / Deployment

- Cloud

Security & Compliance

- Microsoft Entra ID (formerly Azure AD), encryption

Integrations & Ecosystem

- Azure services

- Data pipelines

Support & Community

Enterprise support.

#8 — Google Vertex AI Model Monitoring

Short description:

Vertex AI Model Monitoring helps track model performance and detect drift for models deployed on Google Cloud. It provides automated insights and alerts.

Key Features

- Drift detection

- Monitoring dashboards

- Alerts and notifications

- Integration with Vertex AI

- Automated analysis

Pros

- Easy GCP integration

- Scalable

Cons

- GCP dependency

- Pricing complexity

Platforms / Deployment

- Cloud

Security & Compliance

- IAM, encryption

Integrations & Ecosystem

- BigQuery

- Cloud AI tools

Support & Community

Strong cloud support.

#9 — Superwise.ai

Short description:

Superwise.ai is a specialized platform for monitoring machine learning models and detecting anomalies in real time. It focuses on observability and alerting.

Key Features

- Real-time monitoring

- Drift detection

- Alerts and notifications

- Performance tracking

- Observability tools

Pros

- Specialized monitoring focus

- Easy deployment

Cons

- Smaller ecosystem

- Premium pricing

Platforms / Deployment

- Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- APIs

- ML tools

Support & Community

Growing support ecosystem.

#10 — Deepchecks

Short description:

Deepchecks is a validation and monitoring tool that helps ensure model quality and detect issues like drift and data inconsistencies. It is available as both open-source and enterprise versions.

Key Features

- Drift detection

- Model validation

- Data quality checks

- Testing framework

- Visualization tools

Pros

- Strong validation capabilities

- Open-source option

Cons

- Requires technical expertise

- Limited enterprise features

Platforms / Deployment

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python

- ML frameworks

Support & Community

Active community support.

Comparison Table (Top 10)

| Tool | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Arize AI | ML observability | Web | Cloud | Root cause analysis | N/A |
| WhyLabs | Data observability | Web | Cloud | Data profiling | N/A |
| Fiddler AI | Explainability | Web | Hybrid | Bias detection | N/A |
| Evidently AI | Open-source | Python | Self-hosted | Drift reports | N/A |
| DataRobot MLOps | Enterprise AI | Web | Hybrid | Governance | N/A |
| SageMaker Monitor | AWS users | Web | Cloud | Native monitoring | N/A |
| Azure ML Monitoring | Azure users | Web | Cloud | Integration | N/A |
| Vertex AI Monitoring | GCP users | Web | Cloud | Auto insights | N/A |
| Superwise.ai | Real-time monitoring | Web | Cloud | Alerts | N/A |
| Deepchecks | Validation | Python | Hybrid | Testing framework | N/A |

Evaluation & Scoring of Model Monitoring & Drift Detection Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Arize AI | 9 | 7 | 8 | 8 | 9 | 8 | 7 | 8.05 |
| WhyLabs | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| Fiddler AI | 8 | 6 | 7 | 9 | 8 | 7 | 6 | 7.25 |
| DataRobot MLOps | 9 | 7 | 8 | 9 | 8 | 8 | 6 | 7.90 |
| SageMaker Monitor | 8 | 7 | 9 | 9 | 8 | 8 | 7 | 7.95 |
| Azure ML Monitoring | 8 | 7 | 8 | 9 | 8 | 8 | 7 | 7.80 |
| Vertex AI Monitoring | 8 | 7 | 8 | 9 | 8 | 8 | 7 | 7.80 |
| Superwise.ai | 7 | 8 | 6 | 6 | 7 | 6 | 7 | 6.80 |
| Evidently AI | 7 | 7 | 6 | 6 | 7 | 7 | 9 | 7.05 |
| Deepchecks | 7 | 7 | 6 | 6 | 7 | 7 | 8 | 6.90 |

Score interpretation:

Each criterion is scored from 0 to 10, and the weighted total is the sum of each criterion score multiplied by its column weight. These scores are comparative: a higher score reflects a tool that is more balanced across enterprise needs, usability, and scalability. The best tool still depends on your specific environment, data complexity, and monitoring requirements.
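To make the weighting transparent, here is a minimal Python sketch that computes each tool's weighted total from the per-criterion scores in the table and the column weights in its header. The `WEIGHTS`, `SCORES`, and `weighted_total` names are illustrative only and are not part of any tool discussed here.

```python
# Criterion weights from the scoring table header; they sum to 1.0 (100%).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}

# Per-criterion scores copied from the table, in column order:
# core, ease, integrations, security, performance, support, value.
SCORES = {
    "Arize AI": (9, 7, 8, 8, 9, 8, 7),
    "WhyLabs": (8, 8, 7, 7, 8, 7, 7),
    "Fiddler AI": (8, 6, 7, 9, 8, 7, 6),
    "DataRobot MLOps": (9, 7, 8, 9, 8, 8, 6),
    "SageMaker Monitor": (8, 7, 9, 9, 8, 8, 7),
    "Azure ML Monitoring": (8, 7, 8, 9, 8, 8, 7),
    "Vertex AI Monitoring": (8, 7, 8, 9, 8, 8, 7),
    "Superwise.ai": (7, 8, 6, 6, 7, 6, 7),
    "Evidently AI": (7, 7, 6, 6, 7, 7, 9),
    "Deepchecks": (7, 7, 6, 6, 7, 7, 8),
}


def weighted_total(scores: tuple[int, ...]) -> float:
    """Weighted 0-10 score: each criterion score times its weight, summed."""
    if len(scores) != len(WEIGHTS):
        raise ValueError(f"expected {len(WEIGHTS)} scores, got {len(scores)}")
    total = sum(w * s for w, s in zip(WEIGHTS.values(), scores))
    return round(total, 2)


assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9  # weights must total 100%

for tool, scores in SCORES.items():
    print(f"{tool:<22}{weighted_total(scores):.2f}")
```

To reflect your own priorities, for example weighting security more heavily, adjust `WEIGHTS` (keeping the sum at 1.0) and rerun the loop.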

Which Model Monitoring & Drift Detection Tool Is Right for You?

Solo / Freelancer

- Evidently AI, Deepchecks

SMB

- WhyLabs, Superwise.ai

Mid-Market

- Arize AI, Fiddler AI

Enterprise

- DataRobot MLOps, SageMaker Monitor, Azure ML Monitoring, Vertex AI Monitoring

Budget vs Premium

- Budget: Evidently AI

- Premium: DataRobot, Fiddler AI

Feature Depth vs Ease of Use

- Deep features: Arize AI, DataRobot

- Easy to use: WhyLabs

Integrations & Scalability

- Best integrations: SageMaker Monitor, Vertex AI

Security & Compliance Needs

- Strongest: Azure ML Monitoring, DataRobot

Frequently Asked Questions (FAQs)

1. What is model drift?

Model drift refers to changes in data patterns over time that cause machine learning models to lose accuracy. It can result from shifting user behavior, market changes, or evolving datasets. Detecting drift early helps maintain model performance.

2. Why is model monitoring important?

Model monitoring ensures that deployed models remain accurate and reliable. Without monitoring, models can degrade silently, leading to incorrect predictions and business risks. It also supports compliance and governance.

3. What types of drift exist?

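There are two main types: data drift and concept drift. Data drift (often called covariate shift) occurs when the distribution of the input features changes, for example when a new customer segment starts using a product. Concept drift occurs when the relationship between inputs and the target changes, so the same inputs should now lead to different outputs, as when fraud patterns evolve. Data drift can be detected from inputs alone, whereas concept drift usually becomes visible only once ground-truth labels are available.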
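One common statistical check for data drift on a single numeric feature is the two-sample Kolmogorov-Smirnov (KS) statistic: the largest gap between the empirical cumulative distribution functions of a reference sample and a production sample. The sketch below uses only the Python standard library; `ks_statistic` and the `DRIFT_THRESHOLD` value are hypothetical, and a full statistical test would instead compare the statistic with a critical value or p-value for your sample sizes.

```python
from bisect import bisect_right


def ks_statistic(reference: list[float], current: list[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic.

    Returns the largest vertical gap between the empirical CDFs of the two
    samples: 0.0 means identical samples, 1.0 means their ranges do not overlap.
    """
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    ref = sorted(reference)
    cur = sorted(current)
    max_gap = 0.0
    # Both ECDFs are step functions that only change at observed values,
    # so evaluating the gap at every pooled observation finds the maximum.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        max_gap = max(max_gap, abs(cdf_ref - cdf_cur))
    return max_gap


# Hypothetical alert threshold, chosen for illustration only.
DRIFT_THRESHOLD = 0.1

reference = list(range(100))            # e.g. a feature at training time
current = [x + 50 for x in reference]   # the same feature, shifted in production

print(ks_statistic(reference, reference))  # 0.0: no drift
score = ks_statistic(reference, current)
print(score > DRIFT_THRESHOLD)             # True: drift flagged
```

In practice you would run a check like this per feature on each monitoring window; SciPy's `scipy.stats.ks_2samp` computes the same statistic together with a p-value.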

4. Can these tools handle real-time monitoring?

Yes, many tools support both batch and real-time monitoring. Real-time monitoring is essential for applications like fraud detection and recommendation systems.
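For classification models, one simple real-time check is a rolling accuracy window: keep the outcomes of the most recent N labeled predictions and alert when accuracy over that window falls below a floor. The minimal sketch below assumes each prediction's ground-truth label becomes available; in many systems labels arrive late, which is why tools also watch input and prediction distributions. `RollingAccuracyMonitor`, the window size, and the threshold are hypothetical illustrations, not any vendor's API.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions
    and signals an alert when it drops below `min_accuracy`."""

    def __init__(self, window: int = 500, min_accuracy: float = 0.9) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.window = window
        self.min_accuracy = min_accuracy
        # deque(maxlen=...) discards the oldest outcome automatically.
        self._hits = deque(maxlen=window)

    def accuracy(self) -> float:
        """Accuracy over the outcomes currently in the window."""
        return sum(self._hits) / len(self._hits) if self._hits else 0.0

    def record(self, prediction, label) -> bool:
        """Add one labeled outcome; return True if an alert should fire."""
        self._hits.append(prediction == label)
        if len(self._hits) < self.window:
            return False  # wait for a full window before judging accuracy
        return self.accuracy() < self.min_accuracy


monitor = RollingAccuracyMonitor(window=4, min_accuracy=0.75)
stream = [(1, 1), (0, 0), (1, 1), (1, 1), (1, 0), (0, 1)]  # (prediction, label)
for prediction, label in stream:
    if monitor.record(prediction, label):
        print(f"alert: rolling accuracy {monitor.accuracy():.2f}")
```

Here the alert fires on the sixth outcome, when two of the last four predictions are wrong. A sustained accuracy drop while input distributions look stable is a typical symptom of concept drift rather than data drift.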

5. Are open-source tools reliable?

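Yes. Open-source tools such as Evidently AI are mature and widely used. However, they often lack built-in enterprise features such as governance and compliance tooling, and your team is responsible for hosting, scaling, and maintaining them.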

6. How do monitoring tools detect anomalies?

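Most compare recent production data against a reference baseline, often the training data, using statistical measures such as the Population Stability Index (PSI), the Kolmogorov-Smirnov test, or Jensen-Shannon divergence; some also apply machine learning-based anomaly detection. An alert is triggered when a metric crosses a configured threshold.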
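As an illustration of the threshold-based approach, the sketch below computes the Population Stability Index for one numeric feature and maps it onto the widely used rule-of-thumb bands (below 0.1 stable, 0.1 to 0.25 moderate shift, above 0.25 significant shift). Those bands are a convention from credit-risk modeling, not a value prescribed by any tool above. The equal-width binning over the reference range, the 1e-4 floor for empty bins, and the names `psi` and `drift_status` are assumptions for illustration; many implementations use quantile bins instead.

```python
import math


def psi(reference: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index (PSI) of `current` relative to `reference`.

    Uses equal-width bins spanning the reference range; values outside that
    range are clipped into the first or last bin.
    """
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at a small value so the log term stays finite.
        return [max(c / len(values), 1e-4) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    # PSI = sum over bins of (current% - reference%) * ln(current% / reference%)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def drift_status(score: float) -> str:
    """Map a PSI value onto the commonly cited rule-of-thumb bands."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate shift"
    return "significant shift: alert"


baseline = [float(i % 100) for i in range(1000)]  # hypothetical training data
live = [v + 30 for v in baseline]                  # hypothetical shifted traffic

print(drift_status(psi(baseline, baseline)))  # stable
print(drift_status(psi(baseline, live)))      # significant shift: alert
```

Because the floor keeps empty bins slightly above zero, the proportions no longer sum to exactly 1; for a monitoring alert this small bias is usually acceptable, but it is a design choice worth knowing about.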

7. Do these tools support explainability?

Yes, many tools include explainability features to help users understand model decisions and improve trust in AI systems.

8. What industries use these tools?

Industries like finance, healthcare, retail, and telecom rely on these tools to ensure AI accuracy and compliance.

9. How do I choose the right tool?

Evaluate tools based on scalability, integration, ease of use, and cost. Choose one that fits your infrastructure and monitoring needs.

10. Is implementation difficult?

Implementation complexity varies. Cloud-based tools are easier to deploy, while open-source tools require more setup.

Conclusion

Model monitoring and drift detection tools are no longer optional—they are essential for any organization running machine learning models in production. These tools provide visibility into model performance, detect issues early, and help maintain reliability, fairness, and compliance. As AI systems become more complex, continuous monitoring ensures that models remain aligned with real-world data and business goals.

The right tool depends on your organization’s scale, infrastructure, and expertise. Enterprise platforms offer robust governance and scalability, while open-source tools provide flexibility and cost advantages. The best approach is to shortlist a few tools, run pilot implementations, and validate how well they integrate with your workflows before making a final decision.