Introduction

Data Quality Tools help organizations ensure that their data is accurate, consistent, complete, and reliable across all systems. They automate validation, standardization, and cleansing of data to improve decision-making, regulatory compliance, and operational efficiency. With modern AI-driven analytics and complex data pipelines, maintaining high-quality data is more critical than ever.

Real-world use cases include detecting duplicate records, standardizing customer and product data, cleansing legacy datasets, monitoring data pipelines for anomalies, and supporting governance and regulatory reporting. Buyers should evaluate functionality such as automated profiling, cleansing, validation, monitoring, governance, integration with other systems, scalability, AI/ML-assisted quality detection, ease of use, and cost efficiency.
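
To make the first two use cases concrete, here is a minimal sketch of duplicate detection after standardization. It assumes customer records with `name` and `email` fields. Those field names, the sample data, and the helper functions are illustrative and not taken from any tool reviewed here. Commercial tools typically add fuzzy matching on top of this kind of exact-key comparison.

```python
import re
from collections import defaultdict


def normalize(record: dict) -> tuple[str, str]:
    """Build a comparison key from the (hypothetical) name and email fields."""
    # Collapse repeated whitespace, trim, and lowercase so cosmetic
    # differences do not hide a duplicate.
    name = re.sub(r"\s+", " ", record["name"]).strip().lower()
    email = record["email"].strip().lower()
    return name, email


def find_duplicates(records: list[dict]) -> list[list[dict]]:
    """Group records that share the same normalized key."""
    groups = defaultdict(list)
    for record in records:
        groups[normalize(record)].append(record)
    return [group for group in groups.values() if len(group) > 1]


customers = [
    {"id": 1, "name": "Ada  Lovelace", "email": "ADA@example.com"},
    {"id": 2, "name": "ada lovelace ", "email": "ada@example.com"},
    {"id": 3, "name": "Alan Turing", "email": "alan@example.com"},
]

duplicates = find_duplicates(customers)
print([[r["id"] for r in group] for group in duplicates])  # [[1, 2]]
```

Records 1 and 2 differ only in casing and spacing, so they collapse to the same key once standardized.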

Best for: data stewards, data engineers, analytics teams, compliance teams, and enterprises with large or complex datasets.

Not ideal for: small teams with limited data, organizations without structured data processes, or companies using only a single application with minimal integration needs.

Key Trends in Data Quality Tools

- AI-assisted anomaly detection and cleansing

- Real-time data quality monitoring for streaming and batch pipelines

- Cloud-native and hybrid deployment options

- Integration with governance platforms and metadata management

- Automated profiling and rule-based validation

- Data lineage and audit trail support for compliance

- Self-service data quality dashboards for business users

- Emphasis on data standardization and enrichment

How We Evaluate Data Quality Tools (Methodology)

- Market adoption and customer feedback

- Feature completeness including profiling, cleansing, monitoring, and reporting

- Reliability and performance under high-volume data workloads

- Security and compliance posture

- Integration with other data platforms and ecosystems

- Scalability across on-prem, cloud, and hybrid environments

- AI and automation capabilities

- Ease of use for technical and business users

- Support and community strength

- Pricing and total cost of ownership

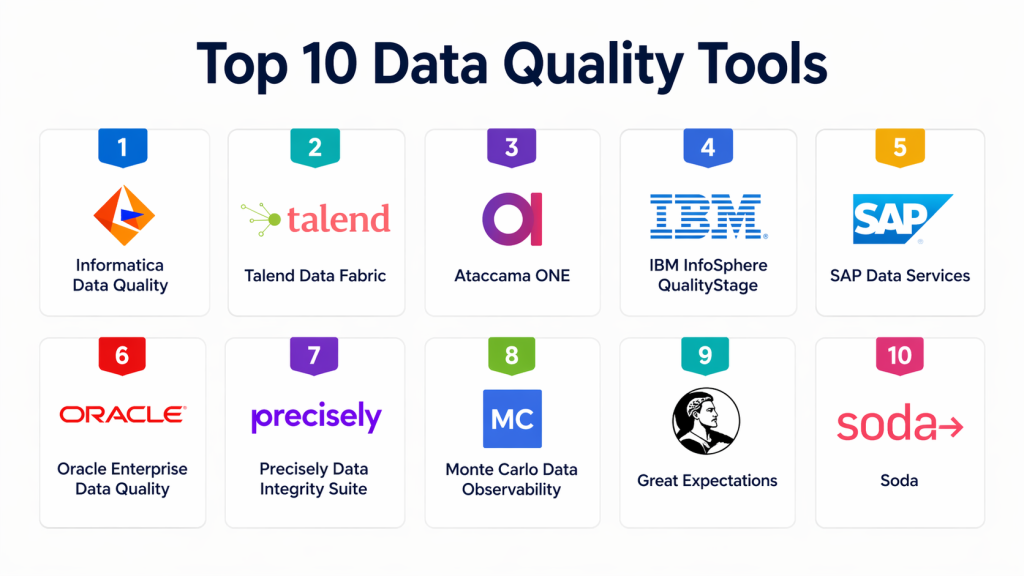

Top 10 Data Quality Tools

#1 — Informatica Data Quality

Short description: Informatica Data Quality is a comprehensive enterprise-grade solution that supports profiling, cleansing, monitoring, and governance of structured and unstructured data. It is well suited to large enterprises managing multi-source and multi-domain data.

Key Features

- Data profiling and monitoring

- Standardization and cleansing

- Rule-based validation

- Address verification and enrichment

- Data stewardship workflows

- Reporting and analytics

- Multi-domain support

Pros

- Robust enterprise features

- Strong governance integration

- Scalable for high-volume environments

Cons

- Complex deployment

- Higher cost for smaller teams

- Requires training for advanced features

Platforms / Deployment

- Web / Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

SSO/SAML, RBAC, encryption. SOC 2 and GDPR compliance supported.

Integrations & Ecosystem

Integrates with data warehouses, ETL platforms, MDM tools, and BI tools.

- API connectivity

- Hadoop and cloud platform integration

- Metadata management support

Support & Community

Strong documentation and enterprise support; active user community.

#2 — Talend Data Quality

Short description: Talend Data Quality ensures data is accurate, complete, and consistent. It combines profiling, cleansing, and monitoring capabilities within Talend Data Fabric for enterprise integration.

Key Features

- Data profiling and validation

- Automated cleansing and standardization

- Duplicate detection

- Data enrichment

- Rule-based monitoring

- Visual dashboards

- Multi-cloud support

Pros

- Flexible deployment

- Open-source components available

- Good for multi-cloud environments

Cons

- Learning curve for beginners

- Can be resource-intensive

- Requires integration with Talend platform for full features

Platforms / Deployment

- Web / Linux / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

Supports encryption, RBAC, and audit logging. GDPR compliance supported.

Integrations & Ecosystem

Integrates with MDM, ETL tools, cloud platforms, and BI solutions.

- API connectivity

- Data lakes and warehouses

- Metadata and lineage support

Support & Community

Comprehensive documentation, active support, and community forums.

#3 — Ataccama ONE

Short description: Ataccama ONE provides AI-powered data quality management with profiling, cleansing, and monitoring. It supports data governance and master data management within a unified platform.

Key Features

- AI-based anomaly detection

- Profiling and cleansing

- Data standardization

- Duplicate management

- Real-time monitoring

- Workflow automation

- Integration with MDM and governance platforms

Pros

- AI-assisted automation

- Unified platform for governance and quality

- Strong analytics and reporting

Cons

- Enterprise-oriented pricing

- Implementation complexity

- Requires skilled users for configuration

Platforms / Deployment

- Web / Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

SSO, encryption, audit logging. GDPR and SOC 2 compliance supported.

Integrations & Ecosystem

Integrates with BI tools, ETL platforms, MDM systems, and cloud data lakes.

- API connectivity

- Real-time dashboards

- Cloud and hybrid integrations

Support & Community

Strong enterprise support, detailed documentation, and active user community.

#4 — IBM InfoSphere QualityStage

Short description: IBM InfoSphere QualityStage focuses on data standardization, cleansing, and matching. It is suitable for organizations needing accurate customer, product, or reference data across systems.

Key Features

- Address cleansing and verification

- Name and entity standardization

- Duplicate detection and merging

- Data enrichment

- Batch and real-time validation

- Multi-domain support

- Data profiling

Pros

- Strong for customer and product data

- Enterprise-grade reliability

- Scalable for high-volume workloads

Cons

- Complex configuration

- Higher learning curve

- Costs can be significant

Platforms / Deployment

- Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, SSO, audit logs. SOC 2 and GDPR compliance.

Integrations & Ecosystem

Integrates with MDM, ETL platforms, and ERP systems.

- API support

- Data lakes and warehouse integration

- Governance frameworks

Support & Community

Enterprise support available; documentation is comprehensive.

#5 — SAP Data Services

Short description: SAP Data Services provides data quality, integration, and profiling capabilities for SAP and non-SAP environments. It is designed for enterprise-scale ETL and quality processes.

Key Features

- Data profiling and cleansing

- Standardization and validation

- Duplicate detection

- Data enrichment

- Workflow automation

- Batch and real-time processing

- Integration with SAP ecosystem

Pros

- Strong SAP integration

- Enterprise scalability

- Supports multi-domain data

Cons

- Complex deployment

- Requires SAP expertise

- Licensing cost can be high

Platforms / Deployment

- Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

Supports SSO, encryption, and auditing. GDPR and SOC 2 compliance.

Integrations & Ecosystem

Integrates with SAP systems, ETL platforms, and BI tools.

- API support

- Data warehouse connectivity

- Governance integration

Support & Community

Enterprise support is strong; documentation available; community moderate.

#6 — Oracle Enterprise Data Quality

Short description: Oracle Enterprise Data Quality ensures high-quality, consistent data across enterprise systems. It provides profiling, cleansing, and monitoring with integration into Oracle ecosystems.

Key Features

- Data profiling and cleansing

- Standardization and matching

- Monitoring and validation

- Duplicate management

- Data enrichment

- Workflow and reporting

- Multi-domain support

Pros

- Strong for Oracle environments

- Enterprise reliability

- Comprehensive feature set

Cons

- Best suited for Oracle-heavy landscapes

- Higher cost for smaller deployments

- Complexity for initial setup

Platforms / Deployment

- Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

Supports SSO, RBAC, and encryption. GDPR and SOC 2 compliant.

Integrations & Ecosystem

Integrates with Oracle databases, ERP, BI tools, and MDM systems.

- API support

- Data lake and warehouse connectivity

- Governance frameworks

Support & Community

Strong enterprise support and documentation; active community among Oracle users.

#7 — Precisely Data Integrity Suite

Short description: Precisely Data Integrity Suite focuses on data validation, profiling, and cleansing. It is suitable for enterprises needing high accuracy in customer, product, or reference data.

Key Features

- Data profiling and validation

- Address verification

- Duplicate detection

- Data standardization

- Data enrichment

- Real-time monitoring

- Reporting dashboards

Pros

- Strong accuracy for reference data

- Enterprise-grade features

- Good real-time monitoring

Cons

- Complex configuration

- Enterprise pricing

- Learning curve for new users

Platforms / Deployment

- Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

Supports SSO, RBAC, and audit logs. GDPR and SOC 2 compliant.

Integrations & Ecosystem

Works with MDM systems, BI platforms, and ERP applications.

- API connectivity

- Integration with ETL workflows

- Data lakes and warehouse support

Support & Community

Enterprise support available; documentation comprehensive; community moderate.

#8 — Monte Carlo Data Observability

Short description: Monte Carlo focuses on data reliability and observability. It monitors pipelines, detects anomalies, and ensures trust in data feeding analytics and ML models.

Key Features

- Automated data monitoring

- Anomaly detection

- Root cause analysis

- Pipeline health dashboards

- SLA tracking

- Alerts and notifications

- Integration with warehouses and lakes

Pros

- Strong for pipeline observability

- Proactive anomaly detection

- Good integration with modern warehouses

Cons

- Limited cleansing capabilities

- More monitoring-focused than full quality suite

- Enterprise cost may be high

Platforms / Deployment

- Web / Cloud

- Cloud

Security & Compliance

Supports SSO and secure integration. SOC 2 compliance supported.

Integrations & Ecosystem

Connects with Snowflake, Redshift, BigQuery, and other warehouses.

- Pipeline monitoring integration

- Data quality alerts

- Analytics platform alignment

Support & Community

Strong support; growing community; documentation comprehensive.

#9 — Great Expectations

Short description: Great Expectations is an open-source data quality framework that helps teams build and automate data validation and profiling pipelines. It is ideal for modern data engineering environments.

Key Features

- Data profiling

- Validation rules

- Testing frameworks for pipelines

- Documentation and data expectations

- Automated monitoring

- Open-source integration

- Supports batch and streaming data

Pros

- Open-source and flexible

- Strong integration with modern data stacks

- Lightweight and developer-friendly

Cons

- Requires technical expertise

- Enterprise support is limited

- Less suitable for non-technical users

Platforms / Deployment

- Web / Linux

- Self-hosted / Cloud

Security & Compliance

Security depends on deployment; RBAC and audit logging configurable.

Integrations & Ecosystem

Integrates with Snowflake, Redshift, BigQuery, dbt, and Spark.

- Open-source ecosystem

- Pipeline integration

- Customizable validations

Support & Community

Active open-source community; documentation and tutorials available.

#10 — Soda

Short description: Soda provides modern data quality monitoring and observability. It validates data, tracks metrics, and alerts teams to quality issues across warehouses, lakes, and pipelines.

Key Features

- Data validation and monitoring

- Metric-based anomaly detection

- Alerts and notifications

- Integration with modern warehouses

- Dashboard visualization

- Automated testing workflows

- Pipeline observability

Pros

- Lightweight and cloud-friendly

- Good for real-time monitoring

- Flexible and developer-friendly

Cons

- Not a full ETL tool

- Limited cleansing capabilities

- Requires technical setup for complex pipelines

Platforms / Deployment

- Web / Cloud

- Cloud

Security & Compliance

Supports RBAC and secure API integration; SOC 2 compliant.

Integrations & Ecosystem

Integrates with Snowflake, BigQuery, Redshift, and dbt.

- Pipeline observability integration

- Metrics dashboards

- Real-time monitoring

Support & Community

Growing support community; good documentation; commercial support available.

Comparison Table (Top 10)

| Tool Name | Best For | Platforms Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Informatica Data Quality | Enterprise data governance | Web / Windows / Linux | Cloud / Self-hosted / Hybrid | Broad enterprise features | N/A |
| Talend Data Fabric | Multi-cloud integration | Web / Linux / Windows | Cloud / Self-hosted / Hybrid | Integration plus quality and governance | N/A |
| Ataccama ONE | AI-powered quality and governance | Web / Windows / Linux | Cloud / Self-hosted / Hybrid | AI-assisted anomaly detection | N/A |
| IBM InfoSphere QualityStage | Customer/product reference data | Windows / Linux | Cloud / Self-hosted / Hybrid | Strong address verification | N/A |
| SAP Data Services | Enterprise SAP environments | Windows / Linux | Cloud / Self-hosted / Hybrid | Batch/real-time ETL integration | N/A |
| Oracle Enterprise Data Quality | Oracle-heavy environments | Windows / Linux | Cloud / Self-hosted / Hybrid | Multi-domain support | N/A |
| Precisely Data Integrity Suite | Reference data accuracy | Windows / Linux | Cloud / Self-hosted / Hybrid | Real-time monitoring | N/A |
| Monte Carlo Data Observability | Pipeline monitoring | Web / Cloud | Cloud | Automated anomaly detection | N/A |
| Great Expectations | Open-source validation | Web / Linux | Self-hosted / Cloud | Flexible validation framework | N/A |
| Soda | Modern observability and metrics | Web / Cloud | Cloud | Real-time data quality metrics | N/A |

Evaluation & Scoring of Data Quality Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Informatica Data Quality | 9.5 | 7.5 | 9.2 | 9.0 | 8.8 | 8.8 | 7.5 | 8.67 |
| Talend Data Fabric | 9.0 | 8.0 | 8.8 | 8.8 | 8.5 | 8.5 | 7.8 | 8.52 |
| Ataccama ONE | 8.8 | 7.8 | 8.5 | 8.6 | 8.4 | 8.3 | 7.9 | 8.36 |
| IBM InfoSphere QualityStage | 8.7 | 6.8 | 8.0 | 8.7 | 8.5 | 8.4 | 7.2 | 8.04 |
| SAP Data Services | 8.5 | 7.5 | 8.2 | 8.4 | 8.3 | 8.2 | 7.5 | 8.10 |
| Oracle Enterprise Data Quality | 8.7 | 7.0 | 8.3 | 8.6 | 8.2 | 8.3 | 7.2 | 8.06 |
| Precisely Data Integrity Suite | 8.4 | 7.5 | 8.0 | 8.3 | 8.1 | 8.1 | 7.3 | 7.97 |
| Monte Carlo Data Observability | 8.2 | 8.2 | 7.8 | 8.0 | 8.0 | 8.0 | 7.5 | 7.98 |
| Great Expectations | 8.0 | 7.8 | 7.5 | 7.8 | 7.9 | 7.5 | 8.2 | 7.85 |
| Soda | 7.8 | 8.0 | 7.4 | 7.8 | 7.8 | 7.6 | 8.0 | 7.78 |
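
The Weighted Total column is a weighted average of the seven criterion scores, using the percentages in the header row. The sketch below reproduces that calculation for the Precisely Data Integrity Suite row. The function and dictionary names are illustrative.

```python
# Criterion weights taken from the table header; they sum to 1.0.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Return the weighted average of criterion scores, rounded to 2 decimals."""
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


# Scores from the Precisely Data Integrity Suite row.
precisely = {
    "core": 8.4,
    "ease": 7.5,
    "integrations": 8.0,
    "security": 8.3,
    "performance": 8.1,
    "support": 8.1,
    "value": 7.3,
}

print(weighted_total(precisely))  # 7.97
```

If your priorities differ, adjust the weights (keeping their sum at 1.0) and recompute the totals before comparing tools.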

Which Data Quality Tool Is Right for You?

Solo / Freelancer

For individual developers or small teams, Great Expectations and Soda are approachable, lightweight, and open-source-friendly.

SMB

For small and mid-sized businesses, pairing an ingestion tool such as Airbyte (a data integration tool, not one of the ranked quality tools) with a validation framework like Soda or Great Expectations can balance flexibility and cost.

Mid-Market

Talend Data Fabric, Ataccama ONE, or Monte Carlo provide governance, monitoring, and broader data quality automation.

Enterprise

Informatica Data Quality, IBM InfoSphere QualityStage, SAP Data Services, and Oracle Enterprise Data Quality are strong candidates for large-scale, multi-domain, regulated environments.

Budget vs Premium

Open-source or SaaS-oriented tools offer cost-effective options, while enterprise-grade platforms justify higher costs with advanced features and governance.

Feature Depth vs Ease of Use

Platforms like Informatica and Talend offer more feature depth but have steeper learning curves, while Soda and Great Expectations prioritize usability for developers.

Integrations & Scalability

Choose tools that support your existing pipelines, warehouses, and lakes. Enterprise platforms often excel at large-scale deployments.

Security & Compliance Needs

Ensure the platform supports audit logging, access control, encryption, and regulatory requirements like GDPR or SOC 2.

Frequently Asked Questions (FAQs)

1. What is a Data Quality Tool?

A Data Quality Tool helps organizations automatically check, clean, and monitor data to ensure it is accurate, consistent, and usable. These tools reduce manual effort and prevent errors in analytics and reporting. They are commonly used in data pipelines, warehouses, and business applications. By enforcing rules and validations, they improve trust in data. They are essential for modern data-driven organizations.

2. Why is data quality important?

Data quality directly impacts business decisions, analytics accuracy, and compliance. Poor-quality data can lead to incorrect insights, operational inefficiencies, and financial losses. It also affects customer experience and reporting reliability. High-quality data ensures better forecasting and decision-making. It is critical for AI, ML, and automation workflows.

3. Can these tools work in cloud environments?

Yes, most modern data quality tools are designed for cloud, hybrid, and on-prem environments. They integrate with cloud warehouses, data lakes, and SaaS applications. Cloud-native tools offer scalability and real-time monitoring. Hybrid deployment is useful for enterprises with legacy systems. Flexibility in deployment is a key factor when selecting tools.

4. Do I need an enterprise tool for small datasets?

Not always. Small teams can use lightweight or open-source tools for basic validation and monitoring. Enterprise tools are more suitable for complex, multi-source environments. Choosing the right tool depends on data volume, complexity, and governance needs. Over-investing in large platforms can increase cost without added value. Start small and scale as needed.

5. Are Data Quality Tools compatible with ETL pipelines?

Yes, most tools integrate directly with ETL and ELT pipelines. They validate data before, during, or after transformation processes. This ensures clean data flows across systems. Integration helps maintain consistency across analytics and reporting layers. Many tools also support real-time pipeline monitoring.
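
As a rough illustration of validating data before a transformation step, the sketch below runs a small set of rules over incoming rows and passes only the valid ones on. The rules, field names, and the `transform` stand-in are all hypothetical. Real tools express rules in their own formats and usually add reporting and alerting.

```python
from typing import Callable

Row = dict[str, object]
Rule = tuple[str, Callable[[Row], bool]]

# Hypothetical rules for an orders feed; real rule sets come from your own data contracts.
RULES: list[Rule] = [
    ("order_id is present", lambda row: row.get("order_id") is not None),
    ("amount is non-negative",
     lambda row: isinstance(row.get("amount"), (int, float)) and row["amount"] >= 0),
    ("currency is a 3-letter code",
     lambda row: isinstance(row.get("currency"), str) and len(row["currency"]) == 3),
]


def validate(rows: list[Row], rules: list[Rule]):
    """Split rows into (valid, rejected); each rejected entry lists the failed rule names."""
    valid, rejected = [], []
    for row in rows:
        failures = [name for name, check in rules if not check(row)]
        if failures:
            rejected.append((row, failures))
        else:
            valid.append(row)
    return valid, rejected


def transform(rows: list[Row]) -> list[Row]:
    """Stand-in transformation: add the amount as integer cents."""
    return [{**row, "amount_cents": round(row["amount"] * 100)} for row in rows]


raw = [
    {"order_id": 1, "amount": 19.99, "currency": "USD"},
    {"order_id": None, "amount": 5.00, "currency": "USD"},
    {"order_id": 3, "amount": -2.50, "currency": "EUR"},
]

clean, rejected = validate(raw, RULES)  # gate before the transformation
loaded = transform(clean)
print(len(loaded), [failures for _, failures in rejected])
```

The same `validate` call can be repeated on the transformed rows with a different rule set to check the output before loading.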

6. How do AI features improve data quality?

AI helps detect anomalies, identify duplicates, and suggest data corrections automatically. It reduces manual rule creation and improves accuracy over time. Machine learning models can predict potential data issues before they occur. AI-driven insights also help prioritize critical data problems. This makes data quality processes more efficient and scalable.
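
Vendor anomaly detection models are proprietary, but the underlying idea can be sketched with a plain statistical check. The example below is a simple z-score test on daily row counts, used here as a stand-in for a learned model. The sample counts, the function name, and the threshold of 3 standard deviations are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` when it lies more than `threshold` sample standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    spread = stdev(history)
    if spread == 0:
        # All historical values are identical; any change is a deviation.
        return latest != history[0]
    return abs(latest - mean(history)) / spread > threshold


# Illustrative daily row counts for one table.
daily_row_counts = [10_120, 9_980, 10_045, 10_210, 9_900, 10_075, 10_010]

print(is_anomalous(daily_row_counts, 10_050))  # False
print(is_anomalous(daily_row_counts, 4_300))   # True
```

A learned model would typically also account for seasonality and trend, which a fixed threshold on recent history does not.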

7. Is real-time monitoring necessary?

Real-time monitoring is important for organizations with streaming data or mission-critical applications. It helps detect issues immediately and prevent downstream impact. For batch-based systems, scheduled monitoring may be sufficient. The need depends on business requirements and data usage patterns. Many modern tools support both approaches.

8. What are the common challenges in data quality management?

Common challenges include data silos, inconsistent formats, duplicate records, and lack of governance. Managing large volumes of data across systems can also be complex. Poor documentation and unclear ownership add to the problem. Tools help address these challenges but require proper implementation. Organizational alignment is equally important.

9. Can one tool handle all data quality needs?

Some enterprise tools provide end-to-end capabilities, but many organizations use multiple tools. One tool may handle profiling while another focuses on monitoring or governance. The choice depends on architecture and business requirements. A unified platform is easier to manage but may be expensive. A modular approach offers flexibility.

10. How should I choose the right Data Quality Tool?

Start by identifying your data sources, volume, and complexity. Evaluate tools based on integration, scalability, security, and ease of use. Consider whether you need real-time monitoring, AI capabilities, or governance features. Test a few tools with real datasets before deciding. The best choice depends on your specific use case and long-term strategy.

Conclusion

Data Quality Tools play a critical role in ensuring that organizations can trust their data for analytics, operations, and decision-making. From enterprise platforms like Informatica and Talend to modern observability tools like Monte Carlo and Soda, the market offers a wide range of solutions tailored to different needs. Choosing the right tool depends on factors such as data complexity, integration requirements, scalability, and governance expectations. Organizations must balance ease of use with feature depth to get the most value from their investment.

Ultimately, there is no single “best” tool for every scenario. The right approach is to shortlist two or three tools that align with your data architecture and business goals, then test them in real-world conditions. Focus on integration capabilities, data accuracy improvements, and operational efficiency during evaluation. By doing this, you can ensure that your chosen solution supports long-term data reliability and growth.